Trends and Gaps in Transformer-Based EEG Modeling: A Review of Recent Developments

DOI:

https://doi.org/10.12928/biste.v8i2.14933

Keywords:

Electroencephalography (EEG), Transformer Architecture, Brain-Computer Interface (BCI), Deep Learning, Attention-Based Modeling

Abstract

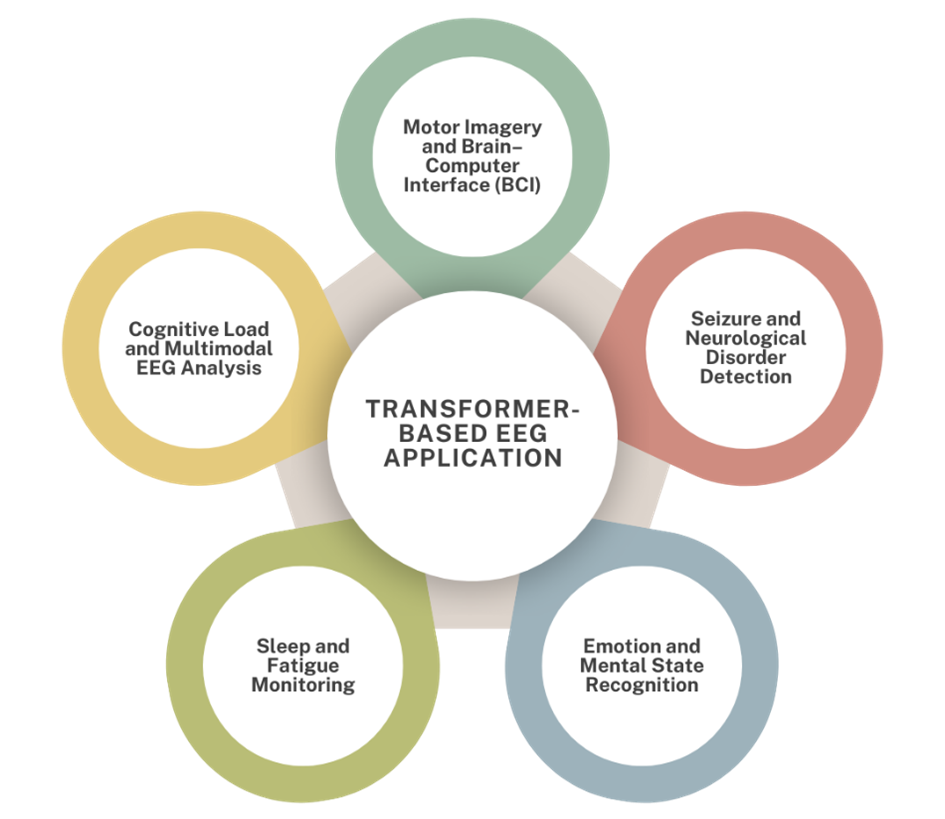

In recent years, Transformer-based deep learning architectures have emerged as a powerful paradigm for modeling EEG signals, capturing spatial–temporal dependencies more effectively than traditional convolutional or recurrent networks. However, the diversity of model designs, limited generalization across datasets, and a lack of standardization make it difficult to evaluate their true potential for real-world applications. This review addresses these issues by systematically examining the evolution, performance, and methodological trends of Transformer-based EEG models published between 2022 and 2024, highlighting both achievements and research gaps. Its main contribution is a comprehensive mapping and critical analysis of Transformer architectures applied to EEG classification, feature extraction, and signal decoding tasks. A structured search of the Scopus database was conducted using a well-defined query combining EEG and Transformer-related keywords and specific inclusion criteria (English-language, peer-reviewed, open-access journal papers from 2022–2024). Data from 63 eligible studies were extracted and categorized by authorship, dataset, architecture type, EEG application, and evaluation metrics. The results show that hybrid Transformer models dominate recent research, achieving accuracies above 90% in tasks such as motor imagery, emotion recognition, seizure detection, and sleep staging. Pure Transformers, such as ViT and BERT-like models, also demonstrate competitive performance but face scalability and interpretability challenges. In conclusion, Transformer-based EEG modeling is advancing rapidly, yet future efforts must focus on model efficiency, explainability, and benchmark standardization to enable broader clinical and real-world adoption.


License

Copyright (c) 2026 Yuri Pamungkas, Abdul Karim, Myo Min Aung, Muhammad Nur Afnan Uda, Uda Hashim

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.
