Improving Mobile Robot Navigation Using Deep Q-Learning with Diagonal Motion under Dynamic Obstacle Environments

DOI:

https://doi.org/10.12928/biste.v8i2.15637

Keywords:

Dynamic Obstacle, Grid Environment, Deep Q-Learning, Mobile Robot Navigation, Discrete Action Space

Abstract

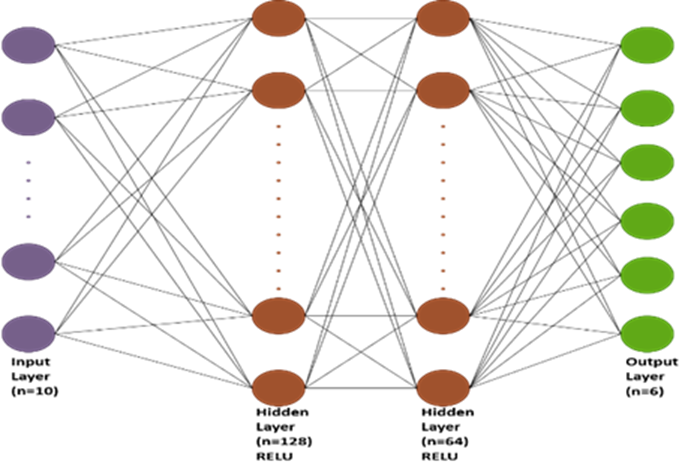

Navigating a mobile robot in dynamic environments remains a significant challenge because obstacle movement is uncertain and changes rapidly. This study proposes a framework for navigating a mobile robot using a deep Q-learning (DQL) algorithm in environments containing both static and dynamic obstacles. The contribution lies in integrating diagonal motion to enhance manoeuvrability and improve decision-making under dynamic conditions. The proposed model is compared with a basic-motion model restricted to four moves (front, back, right, left). The comparison is conducted across four environments that differ in difficulty according to obstacle density. The model is trained for 3000 episodes using a Deep Q-Network (DQN) with two fully connected hidden layers of 128 and 64 neurons, respectively; it employs a greedy policy and uses a LiDAR simulator for spatial perception. Both models achieved a 100% success rate in reaching the target without collision in environments A and B, and 90% in environments C and D. However, the proposed approach reduced the average number of steps required to reach the goal in all four environments, shortening the path by 10% to 20%. This reduces the time and energy required to reach the goal, which is an important advantage in real-world environments. Even when tested at obstacle speeds up to six times the robot's speed, the proposed model outperformed the basic-motion model. It also continued to perform well when noise was added to the LiDAR readings. In short, the proposed model offers a robust and scalable approach to mobile robot navigation in real-world environments.
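The difference between the two action spaces compared in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the (dx, dy) offsets, the names `BASIC_ACTIONS`, `DIAGONAL_ACTIONS`, `min_steps` and `greedy_action`, and the obstacle-free grid are all assumptions made for illustration. It shows only why adding diagonal moves can shorten a path, and how a greedy policy would pick an action from a list of Q-values.

```python
# Illustrative sketch only: names and grid conventions are assumptions,
# not taken from the paper.

# Basic motion model: front, back, right, left as (dx, dy) grid offsets.
BASIC_ACTIONS = [(0, 1), (0, -1), (1, 0), (-1, 0)]

# Diagonal motion model: the four basic moves plus four diagonal moves.
DIAGONAL_ACTIONS = BASIC_ACTIONS + [(1, 1), (1, -1), (-1, 1), (-1, -1)]


def min_steps(start, goal, diagonal):
    """Fewest moves between two cells on an obstacle-free grid."""
    dx = abs(goal[0] - start[0])
    dy = abs(goal[1] - start[1])
    # With diagonals, one move can advance along both axes (Chebyshev distance);
    # without them, each axis needs its own moves (Manhattan distance).
    return max(dx, dy) if diagonal else dx + dy


def greedy_action(q_values):
    """Index of the action with the highest estimated Q-value."""
    return max(range(len(q_values)), key=lambda i: q_values[i])


if __name__ == "__main__":
    start, goal = (0, 0), (6, 4)
    print(min_steps(start, goal, diagonal=False))  # 10
    print(min_steps(start, goal, diagonal=True))   # 6
```

The obstacle-free counts are a geometric bound, not a reproduction of the reported 10% to 20% reduction, which was measured in environments with static and dynamic obstacles.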

License

Copyright (c) 2026 Karam A Al-Zubaidi, Ahmed M Alkamachi, Bahaa Ansaf

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.
