ISSN: 2685-9572 Buletin Ilmiah Sarjana Teknik Elektro

Vol. 8, No. 2, April 2026, pp. 344-379

Language as the Semantic Bridge in Audio, Music, and Multimodal Artificial Intelligence: A Systematic Review

(2021-2025)

Novia Ratnasari, Aji Prasetya Wibawa

Department of Electrical Engineering and Informatics, Universitas Negeri Malang, Indonesia.

ARTICLE INFORMATION |

| ABSTRACT |

Article History: Received 08 December 2025 Revised 04 March 2026 Accepted 30 March 2026 |

|

This study presents a systematic review of research in Audio, Music, and Multimodal Artificial Intelligence published between 2021 and 2025, investigating how language operates as a semantic mediation layer between acoustic signals and high-level meaning. The research addresses the fragmentation of existing surveys by introducing a Domain; Modality; Technique; Task (D-M-T-T) taxonomy that systematically differentiates domain focus, modality configuration, modeling techniques, and task objectives. The research contribution is a structured analytical framework that offers a more granular perspective than architecture-centered surveys of Multimodal Large Language Models. Following the PRISMA 2020 protocol, 2,197 Scopus-indexed publications were screened, yielding 369 eligible studies. Language is defined as a representational layer encompassing natural language and structured symbolic encodings that connect acoustic embeddings to semantic interpretation and generative reasoning. Multimodal systems aligning audio and vision without explicit textual grounding are included and analyzed as non-linguistic alignment architectures within the taxonomy. The findings reveal a shift from recognition-based models toward unified multimodal systems in which language conditions alignment, reasoning, and generative synthesis. For instance, text-conditioned music generation demonstrates how linguistic prompts guide compositional structure and emotional expression. These developments reflect an epistemic transition from signal recognition paradigms to language-mediated generative intelligence. Emerging gaps include limited explainability in generative audio systems and insufficient low-resource cross-modal semantic grounding. |

Keywords: Systematic Literature Review; PRISMA Framework; Audio and Music Artificial Intelligence; Natural Language Processing; Multimodal Integration |

Corresponding Author: Aji Prasetya Wibawa, Universitas Negeri Malang, Jalan Semarang No. 5, Malang, Jawa Timur 6514, Indonesia. Email: aji.prasetya.ft@um.ac.id |

This work is licensed under a Creative Commons Attribution-Share Alike 4.0

|

Document Citation: N. Ratnasari and A. P. Wibawa, “Language as the Semantic Bridge in Audio, Music, and Multimodal Artificial Intelligence: A Systematic Review (2021-2025),” Buletin Ilmiah Sarjana Teknik Elektro, vol. 8, no. 2, pp. 344-379, 2026, DOI: 10.12928/biste.v8i2.15564. |

- INTRODUCTION

Sound represents one of the most fundamental forms of human expression [1][2] and perception [3][4], encompassing linguistic [5], emotional [6][7], identity [9], and cultural dimensions [10][11]. The evolution of artificial intelligence [12][13], digital signal processing [14], and music knowledge has contributed to the emergence of interdisciplinary developments in digital technology, particularly in Artificial Intelligence for Audio and Music (AI Audio & Music) [15]. Within this field, computational modelling [16] enables systems to recognize [17], interpret, and generate new sounds [18] through signal processing techniques [19] and Natural Language Processing (NLP) [20][21]. These developments reflect the interaction between human cognition and machine intelligence, where sound is treated not only as a physical signal but also as a carrier of meaning. The field of AI in Audio & Music has undergone an epistemic transition from recognition-based paradigms to language-mediated generative intelligence [22][23].

The initial evolution was dominated by architectures such as Convolutional Neural Networks (CNN) [24]-[26] and Recurrent Neural Networks (RNN) [27][28] for speech [29] and music recognition tasks [30]. These methods quickly evolved into learning models that can extract semantic structure [31][32] from audio signals without manual annotation, including Transformers [33][34] and self-supervised models such as Wav2Vec2 [35][36], and MusicBERT [37]. Furthermore, generative architectures utilize Variational Autoencoders (VAE) [38][39]. Generative Adversarial Networks (GAN) [40][41], and diffusion models to enrich synthesis analysis [42][43] These models generate sound [44][45] and music patterns [46], while also enabling the detection of meaning and emotion [47][48]. NLP has shifted beyond its traditional linguistic [49] domain to function as an epistemic bridge that is, a structured representational layer connecting acoustic perception, musical expression [50][51], and higher-level semantic reasoning [52][53]. Through a learning and cross modal process, several studies have been used to construct a space within which text [54], sounds [55], and music interact to generate meaning [56]. This paradigm explains the rapid expansion of technology from signal based computing [57][58] to meaning infused cognition [59][60]. Language is positioned as a semantic bridge that enables systems to understand the context of sound output [61]. Within this broader technological evolution, the present study specifically examines how Natural Language Processing reconfigures these systems into semantically mediated and language-driven frameworks.

However, this epistemic transition toward semantically mediated and language-driven systems also introduces new structural and methodological complexities. During this epistemic transition, new challenges emerge at both epistemic and methodological levels, including dataset bias [62], limited cross-cultural generalization [63], and insufficient evaluation trials [64]This transition foregrounds the importance of meaning and emotional resonance [65] in intelligent systems [66]. Despite rapid advances in models [67] and architectures, research in Audio and Music AI remains conceptually fragmented. Many studies prioritize performance optimization without examining how language reshapes the epistemic foundations of sound-based intelligence [68]. As a result, a longitudinal and integrative synthesis of NLP’s evolving role remains limited. This limitation constrains a broader theoretical understanding of how semantic mediation transforms intelligent audio systems and hinders the development of more coherent, context-aware, and human-centered AI applications

Against this backdrop, the present study aims to interpret the conceptual and methodological evolution of NLP [69] within Audio and Music AI between 2021 and 2025. To achieve this, the study employs a Systematic Literature Review (SLR) guided by the PRISMA [70] framework and structured under the Domain, Modality, Technique, Task (D-M-T-T) taxonomy. The analysis maps the architectural, methodological, and epistemic dynamics shaping the development of Audio & Music AI. It also clarifies the position of NLP as a semantic mechanism that unifies perception, expression, and understanding across modalities.

The overarching goal is to formulate a conceptual foundation for developing intelligent systems that not only recognize and generate sound but also interpret meaning, emotion, and aesthetic value mirroring how humans experience and understand the world through language and music. In this study there are 5 research questions, including:

RQ1 | : | How have NLP based approaches and methodologies evolved within Audio and Music AI research between 2021 and 2025, and in what ways does language function as a semantic bridge across modalities? |

RQ2 | : | Which NLP modalities, techniques, and architectures are most dominant across studies in Audio and Music AI, and how have they shifted from signal processing toward semantic and affective representation learning? |

RQ3 | : | What datasets and evaluation metrics are utilized in NLP based Audio and Music AI research, and how consistent and relevant are they across years and domains? |

RQ4 | : | How has Audio and Music NLP been applied across various domains such as healthcare, creative industries, and human AI interaction and to what extent has it contributed to cross disciplinary innovation and multimodal generation? |

RQ5 | : | What methodological challenges, technical limitations, and future research opportunities exist in advancing Audio and Music NLP toward more contextual, coherent, and semantically affective intelligent systems? |

These research questions are designed to clarify how NLP has reshaped the conceptual, methodological, and architectural foundations of Audio and Music AI. By examining not only dominant models and datasets but also epistemic shifts and cross-domain applications, the study situates language as a central mechanism in the evolving intelligence of sound-based systems. By addressing these research questions, this study not only aims to consolidate existing knowledge but also to identify the conceptual and methodological gaps that continue to shape the research trajectory of Audio & Music AI. The Systematic Literature Review (SLR) approach provides a rigorous and structured framework to synthesize findings, highlight dominant trends, and trace the interaction between technological development and theoretical understanding. This review further emphasizes the need to balance acoustic and semantic dimensions, as several areas of musical meaning representation remain relatively underexplored yet hold substantial potential for future scientific inquiry. The research contribution of this study is a longitudinal and conceptually grounded synthesis of NLP’s evolving role in Audio and Music AI, positioning language as a central epistemic mechanism in multimodal intelligence.

- LITERATURE REVIEW

The PRISMA (Preferred Reporting Items for Systematic Reviews and Meta Analyses) framework was used as a methodological guideline to improve clarity, comprehensiveness, and transparency in meta analyses. The PRISMA stages used include: a). Identification; b). Screening; c). Eligibility Assessment; d). Inclusion/Exclusion; and e). Final Selection [71]. A Systematic Literature Review (SLR) was conducted on publications indexed in the Scopus database between 2021 and 2025. The PRISMA stages provide a systematic overview of the evolution, taxonomy, and research gaps in the field of NLP in Audio and Music (AI Audio & Music).

- Eligibility, Planning, and Selection

PRISMA was used to ensure methodological accuracy, process transparency, and analytical coherence. At this stage, the research design, inclusion constraints, and article eligibility criteria were established as the basis for literature selection. The primary objective of this stage was to formulate a conceptual map of Audio & Music AI research, focusing on four analytical dimensions: a). Domain; b). Modality; c). Technique; and d). Task (DMT). These dimensions serve as structural pillars for analyzing how linguistic, acoustic, and computational modalities intersect in this field. During the planning stage, a continuous literature search process is carried out to maintain research consistency by applying several stages, including: a) Identification; b) Filtering; and c) Final Inclusion. This aims to select and identify relevant research that is high-quality, focused, valid, and representative.

- Conditions for Inclusion (C)

Inclusion criteria (C1-C3) form a structured framework for assessing each article’s eligibility. The sequential evaluation ensures that only studies meeting every specified condition are included in the final dataset.

C1 | : | Search Condition Articles must be written in English and contain the terms “Natural Language Processing,” “Text Classification,” “Music,” “Song,” “Lyric,” or “Voice” in the title, abstract, or keywords, retrieved from the Scopus database. |

C2 | : | Screening Condition Articles must be research journals or conference proceedings that include empirical experiments, dataset usage, model development, or evaluation within the context of AI applied to audio, music, or sound. |

C3 | : | Eligibility Condition Eligibility requirements must meet several requirements, including: a). Empirical results; b). Model implementation; c). Dataset utilization; and d). Evaluation metrics in the field of Artificial Intelligence (AI) Audio and Music with full access to files. |

- Restrictions for Inclusion (R)

The Restrictions for Inclusion (R) were applied after the initial search process to ensure the quality, consistency, and methodological rigor of the dataset in accordance with the PRISMA standards. These restrictions functioned as an additional filtering layer to refine the dataset and to exclude studies that, while initially relevant, did not fully meet the analytical or conceptual requirements of this review.

R1 | : | Articles that could not be accessed in full text form whether open or restricted access were excluded. |

R2 | : | Studies not written in English were excluded. |

R3 | : | Articles lacking an abstract or summary were not included. |

R4 | : | Studies focusing solely on language teaching or pure linguistics were excluded. |

R5 | : | After quality screening, conference proceedings, workshop papers, and Q3-Q4 journals were excluded. The final dataset included only Q1-Q2 journal articles with clearly defined empirical validation. |

The initial stage of this Systematic Literature Review (SLR) began with the application of a search string developed according to Condition C1. This process was conducted using the Scopus database, which is widely recognized as one of the most credible scientific repositories in the fields of computer science, engineering, and artificial intelligence. Scopus was selected because it provides access to a broad range of high-quality, peer-reviewed publications, while also enabling categorization by journal quartile (Q-Q4) and research domain classification.

In the initial search phase, the two core components of this study, namely "Natural Language Processing" and "Audio & Music Artificial Intelligence", served as the conceptual foundation for constructing the search query. In the adequacy of comprehensive findings, the search was not limited to keywords, but was not limited to the same terms with the same meaning, presented as follows:

“(TITLE-ABS-KEY ("Natural LANGUAGE processing") OR TITLE-ABS-KEY ("Text Classification") AND TITLE-ABS-KEY ("music") OR TITLE-ABS-KEY ("song") OR TITLE-ABS-KEY ("lyric") OR TITLE-ABS-KEY ("voice")) AND PUBYEAR > 2020 AND PUBYEAR < 2026 AND (LIMIT-TO (SRCTYPE, "j") OR LIMIT-TO (SRCTYPE, "p")) AND (LIMIT-TO (DOCTYPE, "ar") OR LIMIT-TO (DOCTYPE, "cp")) AND (LIMIT-TO (LANGUAGE, "English"))”

- Study Selection Process

The study selection phase aimed to ensure that each analyzed publication was both relevant and scientifically robust. The process began with an initial screening of publications retrieved from Scopus, where articles were filtered through an examination of titles, keywords, and abstracts to assess their alignment with the scope of Audio and Music AI. Articles with uncertain relevance were not immediately excluded but were reevaluated through full text reading to examine their methodology, data integrity, and conceptual contribution. This approach ensured that potentially valuable studies were not overlooked during the initial filtering phase.

- Data Collection

The data collection process in this Systematic Literature Review (SLR) was carried out in a planned and sequential manner to obtain literature most relevant to the research topic. The primary objective was to address the formulated research questions while establishing a strong conceptual foundation grounded in empirical evidence. The inclusion and exclusion process implemented research design, publication type, language use, and topic relevance in the Scopus database, with an initial search totaling 2,197 publications. The next stage was the filtering process based on title, abstract, and content, which yielded 843 articles. The final results of this filtering stage, which yielded quality, and further eligibility screening, yielded 369 journal articles with quartiles Q1-Q2.

- Data Extraction and Quality Assessment

During data extraction, each article meeting the inclusion criteria was examined in depth to document key information and ensure comparability across studies. The extracted fields covered study descriptors, domain classification, methodological details, task typology, datasets, evaluation metrics, and salient findings or limitations. Subsequently, a structured quality assessment was performed to validate the reliability and interpretive robustness of the dataset. During data extraction, each article meeting the inclusion criteria was analyzed in depth to document the following information:

- Study characteristics;

- Research domain focused on audio, music, multimodal, and NLP;

- Techniques and algorithms used;

- Task types such as classification;

- Datasets used, including names and sizes;

- Evaluation metrics;

- Key findings and limitations reported by each study;

A quality assessment was conducted to ensure the validity and reliability of the analytical results. This evaluation considered four primary indicators:

- Domain relevance, the degree to which each study aligns with the scope of Audio and Music AI;

- Methodological clarity, the transparency of model descriptions, evaluation techniques, and experimental outcomes;

- Empirical evidence, the extent to which the study employs datasets and quantitative validation; and

- Document accessibility, the availability of a complete, verifiable manuscript.

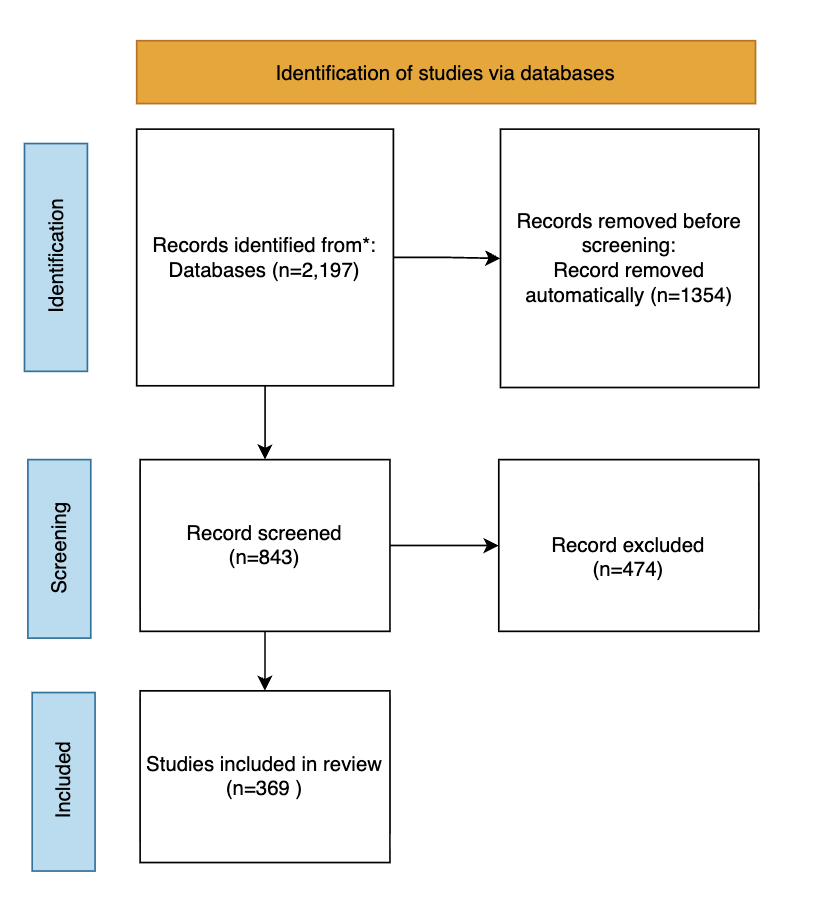

This stage ensured that all included publications contributed verifiable scientific evidence and supported the overall objectives of the review. Throughout the process, data management and documentation practices were maintained rigorously using tools such as Microsoft Excel and Mendeley to record metadata, track screening status, and prevent duplication. The implementation of a systematic data governance protocol not only enhanced the transparency and reproducibility of the research process but also reinforced the scientific integrity of this review. Figure 1 presents a flowchart of the PRISMA 2020 [72] article screening and selection process. The initial identification phase yielded 2,197 publications, and the final selection phase yielded 369 articles from Q1-Q2 journals.

Figure 1. PRISMA Flow Diagram of Study Identification, Screening, and Inclusion (2021-2025)

Figure 1. PRISMA Flow Diagram of Audio and Music AI Study Selection Process (Scopus 2021-2025). This Figure1 illustrates the systematic identification and selection of studies based on the PRISMA 2020 framework. References to the PRISMA flow diagram have been explicitly integrated within the relevant methodological stages to improve narrative continuity. The figure caption has been revised to clearly describe each selection phase (identification, screening, eligibility, and inclusion), thereby enhancing interpretability and alignment with PRISMA reporting standards. A total of 2,197 records were initially identified from the Scopus database using the keywords ("Natural Language Processing" OR "Text Classification") AND (Music OR Song OR Lyric OR Voice). After removing 1,354 duplicates and irrelevant entries, 843 records proceeded to the screening phase, where titles, abstracts, and keywords were examined for domain relevance to Audio and Music AI. Subsequently, 474 records were excluded for not meeting thematic criteria. The remaining 369 high-quality Q1-Q2 journal articles met all inclusion and eligibility standards and were incorporated into the final systematic review dataset. In this study, “Q1-Q2” refers to journals ranked in Quartiles 1 and 2 according to the SCImago Journal Rank (SJR) classification, as accessed and verified in 2025.

- METHODS

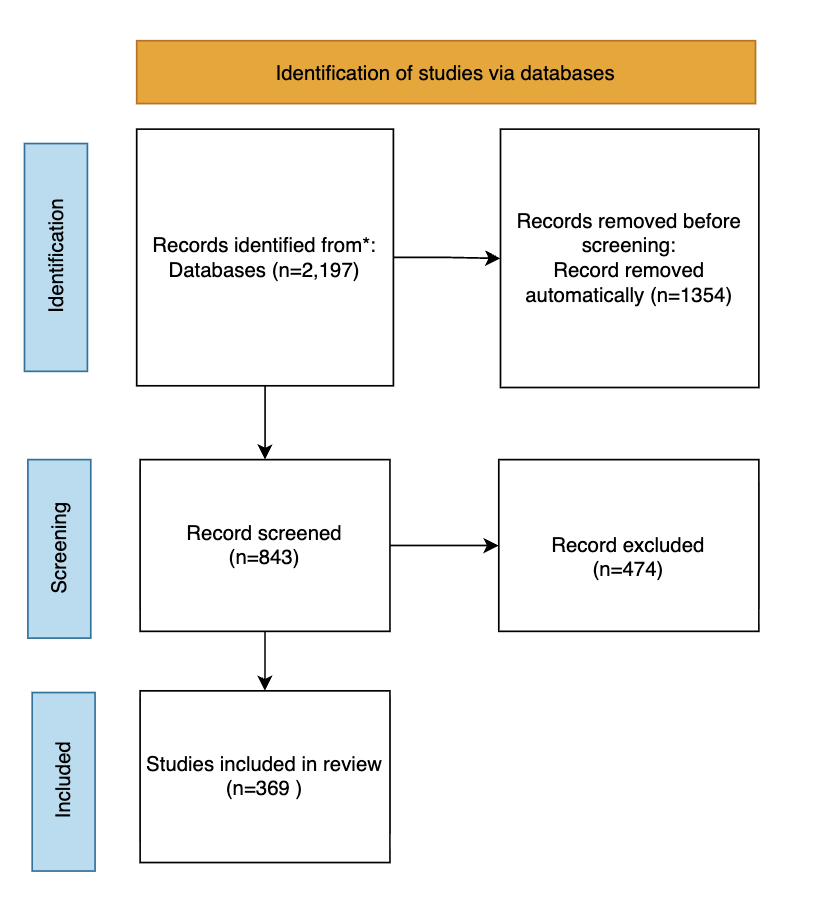

To enhance methodological clarity and provide a visual overview of the study selection procedure, a structured flowchart is presented below. This diagram summarizes the sequential stages of the Systematic Literature Review (SLR), beginning with identification and proceeding through screening, eligibility assessment, and final inclusion. The flowchart complements the PRISMA-based documentation by illustrating the logical progression of filtering and validation steps applied to construct the final dataset. Figure 2 Methodological Flow of the Systematic Literature Review (SLR) Based on PRISMA 2020. The diagram illustrates the sequential stages of identification, screening, eligibility assessment, and inclusion applied in constructing the final dataset.

Figure 2. Methodological Flow of the Systematic Literature Review (SLR) Based on PRISMA 2020

- Identification

The identification stage was conducted using the Scopus database due to its structured indexing system and comprehensive coverage of peer-reviewed publications in Computer Science, Engineering, and Artificial Intelligence. The search strategy was designed to retrieve studies related to Audio and Music Artificial Intelligence (AI), including intersections with Natural Language Processing and multimodal systems. The search covered publications between 2021 and 2025 and yielded 2,197 records. All retrieved records were exported into a structured database to facilitate systematic screening and transparent documentation of selection decisions. The complete search string and retrieval parameters are reported to ensure transparency and reproducibility.

- Screening

During the screening phase, titles and abstracts of the 2,197 records were examined to assess alignment with the conceptual and technical scope of Audio and Music AI. Predetermined inclusion criteria (C1-C3) and exclusion criteria (R1-R5) were applied consistently. Studies were retained if they addressed audio, music, or multimodal systems involving sound, employed AI-based computational methodologies, and reported empirical or quantitative findings. Terminologies such as music generation [73], audio classification [74], lyric-based modeling, transformer architectures, and multimodal learning were considered indicators of thematic relevance. Articles were excluded if they were not written in English, lacked full-text availability, did not employ AI-driven approaches, or were purely conceptual without empirical validation. Following this stage, 843 records demonstrated sufficient thematic and methodological alignment to proceed to full-text eligibility assessment.

- Eligibility Assessment

The eligibility stage involved a comprehensive full-text assessment of the 843 studies retained after title and abstract screening. Each article was examined systematically to ensure methodological rigor, empirical validity, and substantive alignment with the scope of Audio and Music Artificial Intelligence (AI). The evaluation focused on research design transparency, dataset specification, clarity of computational methodology, and the presence of reproducible experimental procedures. Particular attention was given to whether the study explicitly described its model architecture, data sources, evaluation metrics, and reported quantitative results. Studies were excluded if they lacked empirical validation, failed to provide sufficient methodological detail, did not clearly report evaluation protocols, or were not directly situated within audio, music, or multimodal sound-based systems involving AI-based approaches. Conceptual discussions without experimental implementation were also excluded at this stage. Through this structured full-text evaluation, the dataset was refined to 369 journal articles that met all predefined inclusion criteria and satisfied the methodological standards established for this review. These retained studies form the validated dataset for subsequent data extraction, comparative analysis, and longitudinal synthesis. Data management and screening documentation were conducted using Microsoft Excel to ensure traceability of inclusion and exclusion decisions.

- Inclusion and Exclusion

The final dataset consists of 369 journal articles indexed in Scopus and published between 2021 and 2025. The designation Q1-Q2 refers to journals ranked in Quartiles 1 and 2 according to the SCImago Journal Rank (SJR), as accessed in 2025. This quartile classification was applied as a predefined quality criterion during study selection. All inclusion and exclusion decisions were systematically documented in the PRISMA flow diagram, which records the progression of records from identification through screening and eligibility to final inclusion. This documentation ensures transparency of the selection process and traceability of decision-making at each stage. The resulting dataset constitutes the validated empirical foundation for the subsequent data extraction, structured analysis, and longitudinal synthesis conducted under the Domain, Modality, Technique, and Task (D-M-T-T) framework.

- RESULT AND DISCUSSION

- Quantitative Results: Descriptive Overview of the Dataset

This section presents the quantitative structure of the final corpus using the Domain-Modality-Technique-Task (D-M-T-T) framework as an analytical lens. The distribution of the 369 included studies is examined across five dimensions: publication trends over time (2021–2025), domain composition, modality representation, technique adoption, and task classification. Together, these descriptive statistics establish the structural configuration of the dataset prior to the qualitative synthesis of evolutionary and conceptual developments.

- Publication Distribution (2021-2025)

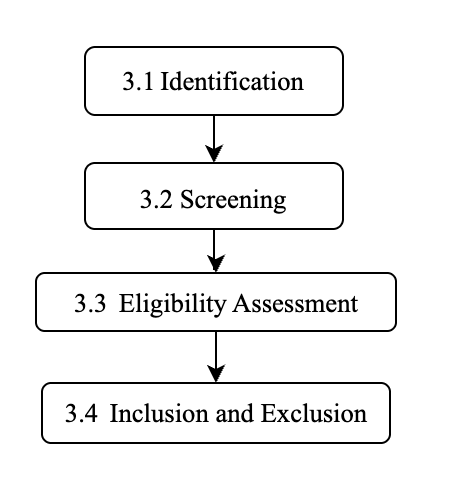

This section presents the quantitative distribution of studies included in the final dataset. A total of 369 peer-reviewed publications published between 2021 and 2025 were analyzed to establish the structural composition of the corpus. The annual and quartile-based distribution of these studies is summarized in Figure 3. Figure 3 depicts the annual distribution of Q1- and Q2-indexed publications included in the final dataset for the period 2021-2025. The corpus comprises 369 peer-reviewed studies, with annual totals of 42 (2021), 52 (2022), 76 (2023), 91 (2024), and 108 (2025), indicating a progressive increase across the review window. Of the total corpus, 230 studies (62.3%) were published in Q1 journals and 139 (37.7%) in Q2 journals. The combined output of 2024 and 2025 accounts for 199 publications (54.0% of the dataset), representing the highest concentration within the five-year span. These quantitative distributions establish the structural composition of the corpus and frame the subsequent domain-, task-, and architecture-level analyses.

Figure

Figure 3. Annual Distribution of Q1 and Q2 Publications (2021-2025)

- Domain Distribution

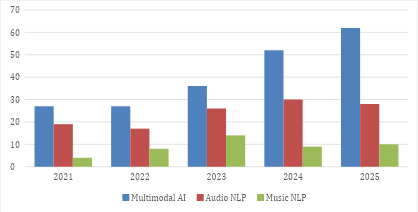

This subsection reports the distribution of the 369 included studies across the primary research domains. Each publication was classified according to its dominant disciplinary focus under the Domain-Modality-Technique-Task (D-M-T-T) framework. The resulting domain-level composition provides a structural overview of the research landscape prior to modality-, technique-, and task-level analyses. Figure 4 presents the annual distribution of the 369 included studies across the three primary research domains: Multimodal AI, Audio NLP, and Music NLP. Multimodal AI constitutes the largest share of the corpus with 204 studies (55.3%), followed by Audio NLP with 120 studies (32.5%) and Music NLP with 45 studies (12.2%). At the annual level, Multimodal AI increased from 27 studies in 2021 to 62 studies in 2025, showing a continuous upward trend. Audio NLP fluctuated between 17 and 30 studies per year, reaching its highest count in 2024 (30 studies). Music NLP represents the smallest proportion across all years, with counts ranging from 4 to 14 studies. These Figure 4 describe the structural distribution of domain-level research within the reviewed period.

Figure 4. Annual Distribution of Studies by Research Domain (2021-2025)

- Modality Distribution

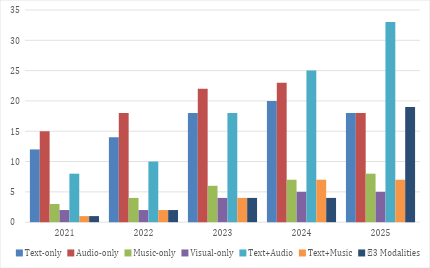

This subsection presents the distribution of modality configurations across the 369 reviewed studies. Each publication was categorized based on the primary data modality or combination of modalities employed in the proposed system, including single-modality and cross-modal configurations. This classification provides a structural overview of how different forms of data representation are utilized within the reviewed research landscape prior to further analytical interpretation. Figure 5 presents the annual distribution of modality configurations across the 369 reviewed studies published between 2021 and 2025. Overall, single-modality research remains substantial throughout the period [75][76], particularly in Audio-only (n = 96) and Text-only (n = 82) studies. However, a clear structural shift emerges over time. In the early phase (2021-2022), the literature is primarily characterized by unimodal designs [77], with Audio-only (15-18 studies per year) and Text-only (12-14 studies per year) dominating the landscape, while cross-modal integration remains comparatively limited (Text+Audio = 8-10 studies). Beginning in 2023, a noticeable transition occurs, marked by a sharp increase in bimodal configurations, especially Text+Audio (18 studies in 2023; 25 in 2024; 33 in 2025). This trajectory suggests an intensifying convergence between linguistic and acoustic representations. By 2025, the expansion of ≥3 modality studies (n = 19 in 2025; total = 30) further indicates the growing prominence of fully multimodal architectures. Collectively, these patterns reflect a longitudinal evolution from modality-isolated modeling toward integrative and multimodal systems, reinforcing the broader epistemic movement from signal-centered processing to semantically coordinated, cross-modal intelligence.

Figure 5. Annual Distribution of Modality Configurations (2021-2025)

Figure 5. Annual Distribution of Modality Configurations (2021-2025)

- Technique Distribution

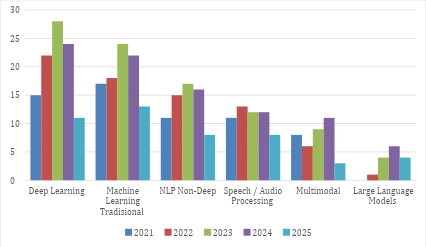

This subsection examines the distribution of computational techniques employed across the 369 selected studies to identify methodological patterns in Audio, Music, and Multimodal AI between 2021 and 2025. Figure 6 presents the longitudinal distribution of computational techniques employed in Audio, Music, and Multimodal AI studies between 2021 and 2025. Across the 369 selected studies, Deep Learning architectures (CNN, LSTM, Transformer) [78][79] constitute the largest category with 100 studies, increasing from 15 in 2021 to a peak of 28 in 2023 before declining to 11 in 2025. This confirms their position as the dominant computational backbone of the field. Traditional Machine Learning methods [80] (SVM, Random Forest, Logistic Regression) [81][82] follow closely with 94 studies, showing relatively stable usage across all five years. This indicates methodological continuity, where classical models coexist alongside deep neural architectures rather than being fully replaced [83][84]. Non-deep NLP techniques (TF-IDF, N-gram, LDA) account for 67 studies [85][86], peaking in 2023 (17), while Speech/Audio Processing methods [87][88] (ASR, MFCC-based pipelines) [89][90] total 56 studies, remaining relatively stable between 11-13 studies during the mid-period. These approaches continue to function as foundational or complementary components within broader modeling frameworks. Multimodal approaches comprise 37 studies, gradually increasing toward 2024 (11 studies) [91][92], reflecting growing cross-modal integration [93]. Large Language Models [94][95] (e.g., GPT, LLaMA) represent the smallest but emerging category with 15 studies, expanding from 0 in 2021 to 6 in 2024, indicating a recent yet notable methodological shift. Overall, the distribution demonstrates a structural transition from feature-centric and task-specific pipelines toward deep, multimodal, and increasingly language-centered computational architectures.

Figure 6. Longitudinal Distribution of Computational Techniques in Audio, Music, and Multimodal AI (2021-2025)

- Task Distribution

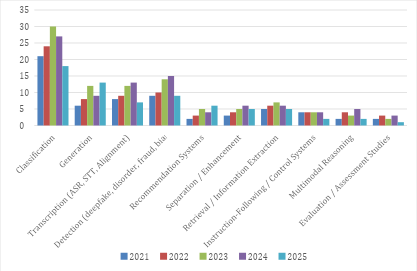

This subsection examines the distribution of research tasks across the 369 selected studies, aiming to identify dominant problem orientations and their evolution within Audio, Music, and Multimodal AI between 2021 and 2025. Figure 7 illustrates the annual distribution of dominant research tasks across the 369 reviewed studies. An analysis of 369 research tasks conducted between 2021 and 2025 indicates that classification remains the dominant problem orientation, accounting for 120 studies (32.5%). This category includes emotion classification [96], sentiment analysis [97][98], genre classification [99][100], speaker identification [101][102], and disease classification [103][104], and consistently represents the primary research focus across all years. The second-largest category is detection, comprising 57 studies (15.4%). This includes deepfake detection [105][106], fraud/phishing detection [107][108], bias detection [109][110], and clinical disorder screening using speech and text [111]. A noticeable increase occurred in 2023-2024, reflecting growing attention to system security and reliability [112]. Transcription tasks [113] (e.g., ASR, speech-to-text, alignment) [114][115], account for 49 studies (13.3%) and remain stable across years, serving as foundational infrastructure for downstream systems [116]. Similarly, generation tasks [117] total 48 studies (13.0%), covering text generation [118][119], music generation [120][121], text-to-speech synthesis [122], and dialogue generation [123][124] with growth particularly evident in 2024-2025. Other categories appear in smaller but meaningful proportions: retrieval / information extraction [125][126] (29 studies; 7.9%), separation / enhancement [127] (23 studies; 6.2%), and recommendation systems [128] (20 studies; 5.4%). Additionally, instruction-following / control systems account for 18 studies (4.9%) [129], and multimodal reasoning represents 16 studies (4.3%) [130], both showing gradual growth in 2023-2024. Studies focused on evaluation / assessment remain limited, with 11 studies (3.0%). Overall, the 2021-2025 research landscape is characterized by the dominance of classification tasks (32.5%), followed by detection (15.4%), transcription (13.3%), and generation (13.0%), with a gradual shift toward generative and multimodal systems in recent years.

Figure 7. Longitudinal Task Distribution Across Audio, Music, and Multimodal AI Domains (2021-2025)

- Comparative Positioning with Prior Reviews

This subsection compares the present review with previous systematic literature reviews published between 2021 and 2025. The aim is to clarify how this study differs in scope, structure, and analytical focus. While prior reviews often concentrate on specific tasks or domains within audio or music research, the present study provides a broader longitudinal synthesis across Audio NLP, Music NLP, and Multimodal AI using the D-M-T-T framework. Table 1 presents a comparative positioning of prior systematic literature reviews published between 2021 and 2025 in relation to the present study, with particular attention to differences in analytical scope and reported findings. Earlier reviews tend to concentrate on specific tasks or subdomains. For example, Lozano [131] identified context-aware recommender systems as predominantly driven by feature engineering and metadata-based personalization strategies. In contrast, our longitudinal analysis (2021-2025) reveals a progressive shift toward multimodal embedding alignment and transformer-based architectures that integrate audio [135], lyrics [136], and contextual signals within unified semantic spaces. Similarly, Barnett [132] examined generative audio models primarily from an ethical and governance perspective, highlighting concerns related to bias, misuse, and accountability. While these normative insights are essential, the study does not provide structural analysis of architectural evolution. Our findings extend this perspective by demonstrating a measurable increase in language-conditioned generative models and multimodal transformer frameworks between 2023 and 2025, indicating a broader epistemic transition beyond ethical discourse alone. Mohammad [133] reported continued dominance of recognition-oriented sound event detection pipelines. However, our cross-domain synthesis indicates that recognition tasks increasingly coexist with generative and multimodal reasoning tasks, suggesting diversification rather than persistence of a single paradigm. More recently, Budi Putra [134] reviewed music information retrieval techniques for genre classification and confirmed the sustained importance of classification-based pipelines in MIR [137]. In contrast, our analysis shows that classification, while still prevalent, is gradually complemented by generation, retrieval, and instruction-following tasks, reflecting functional expansion across the Audio-Music-Multimodal ecosystem. Overall, unlike prior reviews that remain task-specific, domain-specific, or thematically bounded, the present study adopts a broader and longitudinal perspective spanning 2021-2025. By integrating Audio NLP, Music NLP, and Multimodal AI under the unified Domain-Modality-Technique-Task (D-M-T-T) framework, this review provides a structured mapping of architectural evolution, task diversification, and semantic convergence trends. Methodologically, the analysis is grounded in Scopus-indexed, English-language Q1-Q2 publications, ensuring rigor while maintaining transparency in scope definition.

Table 1. Comparative Positioning with Prior Systematic Literature Reviews (2021-2025)

Author | Scope | Time | Coverage | Methodological Boundary |

Lozano [131] | Music recommender | Pre-2021 | Task-specific | Limited to recommendation systems |

Barnett [132] | Generative audio ethics | Pre-2023 | Ethics-focused | No structural AI taxonomy |

Mohammad [133] | Sound event detection | Pre-2024 | Detection-focused | Recognition pipelines only |

Budi Putra [134] | MIR genre classification | 2025 | Domain-specific | No multimodal integration |

Present Study | Audio-Music-Multimodal AI | 2021-2025 | Cross-domain & longitudinal | Scopus-indexed; English-only; Q1-Q2 filtering |

- Qualitative Discussion

- Main Findings

- Paradigm Shift in Research (2021-2025)

Between 2021 and 2025, research in Audio and Music Artificial Intelligence underwent a significant paradigm shift from signal-centered recognition systems toward language-mediated semantic modeling [81] and generative intelligence [138]. In the early phase, most studies focused on acoustic feature extraction [139] and classification tasks [102], emphasizing phonetic accuracy [140], emotion detection [141], and genre recognition [142] through conventional convolutional [143] and recurrent architectures [144]. Sound was primarily treated as a physical signal requiring precise representation and decoding [116]. Over time, however, advances in self-supervised learning [145] and Transformer-based architectures enabled models to move beyond surface-level recognition toward contextual understanding [146] and meaning construction [147]. Language progressively emerged as a central representational layer, mediating the relationship between acoustic perception and semantic interpretation [148]. Rather than merely transcribing or classifying audio signals [149], systems increasingly aimed to interpret intent, affect, and contextual meaning embedded within sound. By the later stages of the reviewed period, generative and multimodal frameworks became prominent, transforming audio and music AI into ecosystems capable not only of recognition but also of semantic synthesis and co-creative generation. This evolution reflects an epistemic transition in which sound is no longer treated solely as a signal to be decoded, but as a linguistic and affective construct situated within a broader multimodal semantic space.

- Evolution of Domains and Modalities

The development of Artificial Intelligence (AI) research in the fields of audio [150] and music shows that technical progress is determined not only by advances in learning models but also by how domains and modalities are defined and integrated. The domain represents a disciplinary focus, such as Audio AI [151][152], which emphasizes sound signal processing [153][154]; Music AI [155][156], which highlights musical structure and affective expression [157][158]; and Multimodal AI [159][160], which combines multiple forms of data to produce unified meaning. Meanwhile, modality refers to the form of data representation text, audio, image, or other signals that serves as the foundation for perception, representation, and generation in AI systems. Within the context of research from 2021 to 2025, the relationship between domain and modality has become increasingly interwoven, reflecting a paradigm shift from single modality processing [161][162] to language mediated multimodal systems [163][164]. Accordingly, this section discusses the evolution of domain concepts and the role of modality as a semantic connector [165], enabling AI systems to understand, interpret, and generate meaning across different forms of representation.

Table 2 presents the evolution of domains and modalities in Audio, Music, and Multimodal AI research between 2021 and 2025. Overall, the developmental trend indicates a transition from acoustic signal processing to language-mediated semantic understanding [166]. In the early phase, Audio AI and Music AI focused on signal recognition and emotion analysis [48][167], while Multimodal AI primarily linked text and audio descriptively [168]. Over time, all three evolved toward contextual and generative systems [169], with language functioning as the semantic bridge across modalities. By 2025, the integration of six modalities text, audio, music, visual, emotion, and memory positions language as the central mechanism for cross-domain meaning construction.

Table 2 presents a qualitative overview of the evolution of domains and modalities in Audio, Music, and Multimodal AI research from 2021 to 2025. Between 2021 and 2025, research in Audio and Music AI moved from acoustic signal recognition to systems capable of interpreting meaning, context, and emotion in sound [187]. Language became the semantic connective tissue [188], linking text, sound, music, and vision within shared representational spaces [189][190]. This development unfolded across Audio AI, Music AI, and Multimodal AI, which gradually converged toward cross-modal semantic representation.

In AudioNLP, early work centered on acoustic features such as MFCC [191], spectrograms [192], and mel filterbanks, using CNN [193][194] and RNN architectures [195][196] for ASR [197][198] and SER [199][200], focusing mainly on signal recognition [201]. The introduction of self-supervised models such as Wav2Vec2 [202] and HuBERT enabled systems to learn linguistic representations from raw speech, connecting acoustic signals to speaker intent [203][204]. This shift supported tasks such as intent detection [205] and emotion-aware modeling. Later, deeper NLP integration appeared through BLSTM-CRF [206][207], Transformer-based semantic parsing [208], and SpeechSQLNet [161], enabling speech-to-SQL translation. Applications expanded to education [209]-[211] and healthcare [212][213] via systems such as SAIL [214] and DAX™ [215][216]. Recent models, including multilingual ASR [217][218] and SSL-based EmoSDS [219], support adaptive and emotionally responsive dialogue. Overall, audio is no longer treated only as a signal, but as a linguistic expression carrying meaning.

In Music AI, research progressed from signal-based analysis [220] to linguistic and semantic modeling [221]. Early studies linked lyrics [222], emotion [223][224], and musical features such as tempo and tonality [225], using Word2Vec and FastText embeddings [226]-[228]. Later, models such as BiLSTM [229][230] and BERT [231][232] enabled lyric music alignment and text-conditioned emotion generation. The field then entered a generative phase with architectures such as MuseBERT, MusicLM, and MusicCaps-CLAP [233], which translated text into coherent musical output. Datasets such as MusicCaps and GTZAN-Fusion [46][234] strengthened semantic alignment, while cross-lingual studies expanded cultural understanding [235]. Recent contrastive frameworks unified text [236], mood [237], and melody [238], and models such as Jukebox-XL and Udio T5 generated music aligned with linguistic prompts [239], supporting applications in therapy and education [240][241].

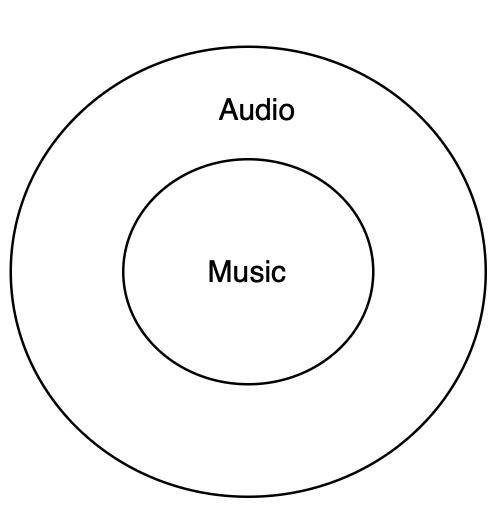

Figure 8 illustrates the ontological and epistemic relationship between the two principal research domains Audio AI and Music AI. Based on the synthesis of studies from 2021 to 2025, Audio AI primarily concerns the processing and perception of acoustic signals, whereas Music AI is oriented toward the construction of semantic meaning and affective expression derived from sound. Consequently, from an epistemic standpoint, Music AI is positioned as a subset of Audio AI, representing a higher level of understanding in which sound is not merely recognized but also interpreted linguistically and emotionally.

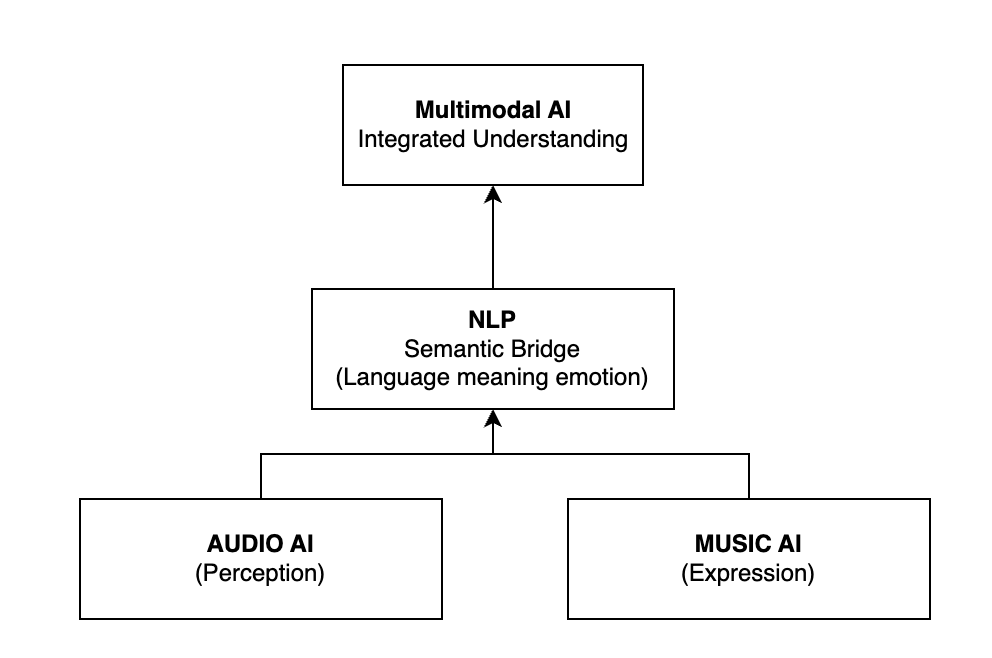

Figure 9 underscores the position of Natural Language Processing (NLP) as the semantic axis connecting perception in Audio AI [242][243] and expression in Music AI [244][245], ultimately converging toward integrated understanding within Multimodal AI [246][247]. NLP functions not merely as a linguistic tool but as an epistemic mechanism that translates sound into meaning and meaning into emotional expression [248][249]. The evolution of Audio, Music, and Multimodal AI research between 2021 and 2025 reveals that the primary role of NLP has shifted from a purely linguistic component to a semantic core that interlinks perception, expression, and cross modal understanding [250][251]. Audio AI represents the perceptual dimension, focusing on the recognition [252][253] and interpretation of acoustic signals, whereas Music AI operates within the expressive dimension [254][255], constructing emotional [256][257] and semantic structures from sound [258][259]. Situated between these layers, NLP serves as an epistemic bridge, connecting language, meaning, and affect, thereby allowing AI systems to convert acoustic perception into meaningful musical expression. Collectively, these three domains illustrate an epistemic shift from signal level processing to cross modal meaning representation. Despite significant progress, cross domain research continues to face challenges in maintaining semantic representation stability, achieving affective alignment across modalities, and developing cross cultural benchmarks capable of evaluating semantic coherence comprehensively.

Table 2. Qualitative Overview of Domains and Modalities (2021-2025)

Year | Audio AI | Music AI | Multimodal AI |

2021 | Signal & prosody recognition [170][171]; CNN/RNN architectures [172][173]; ASR, SER | Lyric emotion analysis; Word2Vec/FastText embeddings s [174] | Dual-modality (Text Audio); AudioCLIP, AudioCaps |

2022 | Linguistic representation learning [175]; SSL (Wav2Vec2, HuBERT) [176][92]; semantic abstraction [177] | Lyric emotion mapping [178]; mood tagging; text acoustic correspondence | Tri-modality (Text Audio Music) [179]; genre [180] & mood recognition |

2023 | Transformer-based semantic alignment  [171]; prosody meaning integration [171]; prosody meaning integration | Text-conditioned generation; BERT, BiLSTM [181][182]. | Quad-modality (+Emotion); CLAP, MusicLM |

2024 | NLP acoustic convergence [94]; hybrid systems (SpeechSQLNet, MAM-BERT)  [183] [183] | Semantic affective generative phase; MusicLM, MuseBERT, [184] CLAP | Penta-modality (Visual, Social); VOICE, CAMFF |

2025 | Cognitive & adaptive dialogue; EmoSDS, Vox Calculi | Dynamic linguistic control; Udio-T5, Jukebox-XL | Hexa-modality (+Memory); VISION [185], V2M [186] |

Figure 8. Ontological and Epistemic Relation between Audio AI and Music AI

Figure 9. The Role of NLP as a Semantic Bridge among Audio AI, Music AI, and Multimodal AI

- Evolution of Task Classification and Applications in Audio & Music AI

The classification of tasks and applications in Audio and Music AI highlights the rapid evolution that occurred between 2021 and 2025. Through diverse tasks such as speech recognition [260][261], lyric analysis, and cross modal integration, the interaction between audio, music, and language processing has become the fundamental basis for developing intelligent systems capable of comprehending, interpreting, and creating contextual meaning. Table 3 illustrates the evolutionary trajectory across the three primary domains Audio AI, Music AI, and Cross-Modal AI from 2021 to 2025. The transition moves from signal driven perceptual systems to cross modal meaning generation. In the early years, conventional models such as CNN and LSTM dominated recognition and classification tasks in both speech processing [262][263] and lyric analysis. With advancing technology, self-supervised models like Wav2Vec2 and HuBERT enabled the emergence of linguistic representations without manual annotation. Throughout this evolution, language has served as the semantic bridge uniting acoustic perception, emotional expression, and symbolic representation signifying a paradigm shift from signal recognition toward semantic affective cognition [264], which now forms the epistemic foundation of modern Audio and Music AI.

Table 3. Qualitative Analysis of the Evolution of Domains, Tasks, Models, Applications, and Research Directions (2021-2025)

Year | Domain & Main Focus | Representative Tasks | Dominant Models / Architectures | Applications & Implementation Context | Research Direction & Emerging Trends |

2021 | Audio AI Acoustic Signal Level Recognition | ASR [265], KWS [266], SER [267] | CNN [268], RNN [269], CRNN [270] | Speech recognition [267], voice command [271], emotion tagging [272] | Transition from signal recognition to early linguistic mapping |

| Music AI Early Linguistic Analysis of Lyrics | Lyric classification [273], lyric emotion recognition | LSTM [274], BERT Lyric [275], CNN Attention [276] | Affective lyric analysis and music emotion classification | Strengthening correlations between text and musical expression |

| Cross Modal AI Early Text Audio Integration | Text to-audio retrieval, audio captioning [277] | AudioCLIP, WavCaps (dual encoder) | Text based sound description, sound retrieval | Early semantic mapping between text and sound |

2022 | Audio AI Self Supervised Representation Learning | Multilingual ASR, Contextual KWS [278] | Wav2Vec2 [279], HuBERT [280] | Multilingual ASR [281], intent recognition [282] | Label free learning and cross lingual fine tuning |

| Music AI Strengthening Text Music Semantic Relations | Lyric mood correlation [283], emotion tagging [284] | BiLSTM, FastText, Word2Vec | Mood analysis and semantic correlation in music [162] | Expansion of affective datasets and text music alignment |

| Cross Modal AI Tri Modal Integration (Text Audio Music) | Text music alignment, semantic tagging | MusicCLIP, AudioSet Music Subset | Text music annotation [164], genre and mood tagging | Formation of joint representational space across modalities |

2023 | Audio AI Semantic Alignment and Contextual Understanding | SER, Speech Intent Recognition | Transformer, WavLM, SSL EmoSDS | Conversational AI, emotional voice assistant [285] | Semantic integration across tasks and domains |

| Music AI Semantic Alignment Between Lyrics and Music | Lyric to music generation, affective mapping [286] | Transformer, Contrastive Learning [287] | Text based melody generation, affective synthesis | Semantic synchronization between words, rhythm, and harmony [239] |

| Cross Modal AI Contrastive Pretraining and Generation | Text to audio/music generation | CLAP, AudioLDM, MusicLM | Text to sound and text to-music generation | Convergence of text conditioned generation |

2024 | Audio AI Domain Expansion and Prosody Modeling | Emotion aware TTS [288] , SpeechSQL | Stack Transformer [289], BLSTM-CRF, MAM BERT | Healthcare [290]-[292], education [293][294], industrial command systems [295][296] | Full integration between signal and semantic representation |

| Music AI Generative Linguistic Musical Systems | Text conditioned music generation, lyric aware composition | MusicLM, MuseNet Lyric, MelodyGPT [297] | Generative music based on linguistic narrative | Consolidation of semantic affective modeling |

| Cross Modal AI Context Aware Multimodal Fusion | Emotion recognition, instruction following | RCFANN BLSO, CAMFF, VOICE | Interactive multimodal dialogue, visual audio reasoning | Integration of emotional and visual context in multimodal fusion |

2025 | Audio AI Unified Semantic Representation | SpeechSQLNet 2.0, Adaptive Dialogue | CNN GRU hybrid, instruction-tuned LLMs | Voice reasoning, cognitive query translation | Semantic generalization and architectural efficiency |

| Music AI Co Creative Generative Systems | Lyric conditioned composition, semantic affective generation | Udio T5, LyricFusionNet 2, Jukebox XL | Adaptive generative music and AI based music therapy | Aesthetic cognition and language emotion integration |

| Cross Modal AI Integrated Multimodal Intelligence | Cross modal composition, multimodal narrative synthesis [286] | V2M, VISION, Memory Augmented Transformer | Video to music generation, contextual sentiment analysis [298][299] | Integrated processing based on cross domain semantic context [300] |

- Technical Evolution

The technical evolution of Audio and Music AI between 2021 and 2025 reflects a fundamental shift from feature based signal processing toward language mediated semantic and generative representation learning. In the early phase, research primarily focused on acoustic feature extraction [301][302] and emotion pattern recognition using conventional architectures such as CNN [303],[122],[173] and LSTM [304][305]. This progression demonstrates a growing convergence among Audio AI, Music AI, and cross domain systems, where language functions as the central mechanism linking perception, representation, and expression within artificial intelligence cognition.

Table 4 presents the evolution of techniques and methodological approaches across Audio, Music, and Multimodal AI from 2021 to 2025. Overall, the trajectory moves from feature driven signal processing toward language-mediated semantic representation learning. Audio AI has evolved from acoustic recognition to Transformer [306] and SSL based linguistic comprehension systems. Music AI has transitioned from statistical modeling to generative frameworks that employ NLP to control emotional expression and musical structure. Meanwhile, Multimodal AI expands the semantic space by integrating text, sound, music, vision, and emotion within a unified representational framework. Collectively, the integration of language emerges as the key transformative factor, enabling systems not only to recognize sounds and melodies but also to interpret, generate, and construct meaning across domains.

Table 4. Evolution of Linguistic Roles in Audio, Music, and Multimodal AI (2021-2025)

Year | Domain | Dominant Approach | Key Models | Role of Language | Core Implication |

2021 | Audio AI | Signal level processing | CNN, RNN, CRNN | Absent; phonetic focus | Pre-semantic acoustic recognition [307] |

| Music AI | Feature based emotion mapping | Seq2Seq, CNN LSTM [190][308] | Lyrics as labels | Music treated as numerical patterns |

| Multimodal | Contrastive dual encoders | AudioCLIP, WavCaps | Captioning | Initial text–audio alignment |

2022 | Audio AI | Self-supervised learning | Wav2Vec2, HuBERT | Emerging mediator | Label-free phonetic semantics |

| Music AI | Lyric-informed affect modeling | BiLSTM, CNN [309]-[311] | Emotion tagging | Early musical semantics |

| Multimodal | Tri-modal fusion | MusicCLIP | Semantic binder | Linguistic musical unification |

2023 | Audio AI | Language-conditioned Transformers | Whisper, WavLM [312] | Parsing & decoding | Toward semantic voice understanding |

| Music AI | Deep semantic representations | MusicBERT, Lyric2Vec | Meaning interpreter | Language shapes musical meaning |

| Multimodal | Large-scale contrastive pretraining | CLAP, MusicLM | Generative controller | Text conditioned generation |

2024 | Audio AI | Context aware fine tuning | SpeechSQLNet, WavLM | Logical structure | Reasoning over spoken meaning |

| Music AI | Prompt driven generation | MuseBERT, MusicLM | Semantic controller | Language governs music structure |

| Multimodal | Attentive multimodal fusion | VOICE [313] | Interaction interface | Language mediates human AI context [314] |

2025 | Audio AI | Instruction tuned SSL | SpeechSQLNet 2.0 | Reasoning module | Autonomous meaning understanding [315][316] |

| Music AI | Generative diffusion | Udio-T5, Jukebox-XL | Central controller | Linguistic co-creation |

| Multimodal | Memory augmented Transformers | V2M, VOICE | Semantic bridge | Language as epistemic core |

- Representative Datasets and Models

This section examines the evolution of representative datasets and models underlying Audio and Music AI from 2021 to 2025, highlighting a shift from signal-based corpora and convolutional architectures toward multimodal datasets and Transformer-based and Large Multimodal Models capable of unified semantic representation. As summarized in Table 5 early datasets relied on single-signal phonetic annotations such as LibriSpeech [317] and MoodyLyrics4Q [318]. From 2022 onward, paired text audio resources including AudioCaps and MusicCaps enabled explicit semantic alignment between sound and language. The 2023 2024 period saw rapid expansion into large-scale multimodal datasets, notably WavCaps and the CLAP Corpus, which integrated visual and affective dimensions for contrastive and generative learning. By 2025, hyper multimodal and co creative datasets such as LyricFusion PromptBank [319] and V2M emerged to support context-aware generation with Large Multimodal Language Models (LMLMs), underscoring the transformation of datasets from passive signal repositories into active semantic spaces where language, sound, and emotion jointly shape meaning-centered artificial intelligence.

In Audio AI, dataset evolution reflects a shift from phonetic annotation corpora toward multimodal resources enriched with linguistic and affective meaning. Early datasets such as LibriSpeech [307], CommonVoice [58], TED-LIUM3[330], and the AMI Meeting Corpus emphasized precise transcription and underpinned models like Wav2Vec2 [279] and HuBERT. From 2022, semantic oriented datasets including AudioCaps, Clotho v2, and AudioSet expanded analysis from speech recognition to meaning representation through paired audio text descriptions. Large multilingual corpora such as VoxCeleb2, MLS, and VoxPopuli further enabled cross-lingual learning in 2023 [331], followed by task- and context-specific datasets in 2024, including SpeechSQLNet, SAIL, and MAM-BERT. By 2025, datasets such as the Smart Voice Assistant Corpus and Clinical Tele Follow Up Corpus integrated signal, text, and affect, explicitly linking prosody, emotion, and cognition [332][333]. Marking a transition from signal-level annotation to semantic alignment.

Table 5. Qualitative Analysis of Dataset Evolution in Audio, Music, and Multimodal Domains (2021-2025)

Year | Domain & Dataset Characteristics | Example Datasets | Primary Functions & Applications | Research Direction & Emerging Trends |

2021 | Audio AI | LibriSpeech, CommonVoice, TED LIUM3, AMI | Supervised ASR and SER training through alignment | Focus on phonetic accuracy and basic linguistic labeling |

| Music AI | MoodyLyrics4Q, LyricFind, MetroLyrics | Lyric emotion classification, genre tagging [320] | Early exploration of correlations between text and affective expression |

| Multimodal AI | ESC 50, UrbanSound8K, GTZAN | Sound/music classification | Reliance on spectral features for signal based detection |

2022 | Audio AI | AudioCaps, Clotho v2, AudioSet | Audio captioning, text grounded tagging | Shift toward text grounded semantic learning |

| Music AI | MELD Lyric, MIREX LyricEmotion | Lyric melody alignment, emotion-aware generation | Strengthening cross modal representation learning |

| Multimodal AI | MusicCLIP subset | Text music annotation and tagging | Formation of joint representational spaces |

2023 | Audio AI | VoxCeleb2, MLS, VoxPopuli | Cross-lingual ASR and SER [321] | Advancements in cross lingual and transfer learning [111] |

| Music AI | MusicCaps, MELD Lyric, MIREX Emotion | Lyric to music generation, semantic alignment | Enhancement of semantic embedding alignment |

| Multimodal AI | WavCaps, LAION Audio | Text audio retrieval and generation | Scaling up toward universal semantic representation |

2024 | Audio AI Task | SpeechQL, SAIL, MAM BERT | Speech to SQL, morphology aware TTS, voice-based health AI [322]-[324] | Expansion into semantic prosodic contextualization |

| Music AI | LyricSet-XL, MuseData Fusion, PolyglotLyrics | Text to music generation, lyric aware composition | Growth of prompt-based, multicultural datasets |

| Multimodal AI | CLAP Corpus, AudioCLIP, IEMOCAP | Emotion recognition, multimodal captioning [325] | Fusion of visual, acoustic, and emotional features |

2025 | Audio AI | Smart Voice Assistant Corpus, Clinical Follow Up [326][327] | Elderly dialogue modeling, affective response detection [328] | Semantic emotional interpretation and memory modeling |

| Music AI | LyricFusion PromptBank, Udio Creative Pairs | LLM-driven music generation, lyric therapy [329] | Human AI co creation guided by aesthetic preference |

| Multimodal AI | V2M, VOICE, VISION | Video to music generation, multimodal reasoning | Training of Large Multimodal Language Models (LMLMs) |

- Architecture and Computational Mechanisms

This section examines the evolution of architectures and computational mechanisms in Audio and Music AI between 2021 and 2025, highlighting a shift from signal based models toward language- and semantics driven systems within multimodal frameworks. Through the convergence of deep neural networks [293][294], Transformer based architectures, and diffusion generative models, AI systems have progressed beyond sound and structure recognition toward semantic understanding, reasoning, and generation mediated by linguistic and affective representations. As summarized in Table 6, early architectures were dominated by CNN RNN models [334] emphasizing acoustic feature extraction [335][336] and basic classification. Since 2022, self supervised and contrastive learning approaches have enabled explicit alignment between sound and text representations [337], narrowing the gap between perception and semantics. The 2023-2024 period marked the integration of Transformers, Large Language Models (LLMs) [338][339] and diffusion architectures to establish shared cross-modal representational spaces. By 2025, architectures evolved into language conditioned systems combining neural computation with symbolic reasoning [340][341], positioning language as the central semantic controller for adaptive and affectively coherent audio and music generation.

Table 6. Representative Models and Architectures in Audio, Music, and Multimodal AI (2021-2025)

Year | Domain | Architectural Paradigm | Representative Models | Core Advances | Research Outcomes |

2021 | Audio AI | Signal-based CNN RNN | CNN, CRNN, DeepSpeech 2, PANNs | MFCC/spectrogram feature learning | Phonetic accuracy; no semantic modeling |

| Music AI | Sequential statistical models | CNN, LSTM, MFCC and Word2Vec | Basic lyric melody embedding | Early linguistic acoustic alignment |

| Multimodal AI | Monomodal encoders | PANNs, Musicnn, Wav2Vec2 | Signal to label learning | Isolated domains without cross-modal semantics |

2022 | Audio AI | Self supervised Transformers | Wav2Vec2, HuBERT | Masked prediction; lower WER | Shift toward semantic representations |

| Music AI | Transformer enhanced modeling | BERT, CRNN, Attention | Prosody syntax emotion alignment | Context-aware musical semantics |

| Multimodal AI | Dual encoder contrastive learning | CLAP, AudioCLIP | Shared latent spaces | Language as semantic mediator |

2023 | Audio AI | Unified encoder decoder | SpeechT5, CLAP | Multi task semantic embedding | Integrated recognition and generation |

| Music AI | Generative multimodal models | MusicBERT, MuLan, Lyric2Vec | Text conditioned melody generation | Music as linguistic expression |

| Multimodal AI | Text conditioned Transformers | MusicLM, Riffusion | Language driven waveform synthesis | Language controls generation |

2024 | Audio AI | Task oriented semantic modeling | SpeechSQLNet, MAM-BERT | Semantic prosodic integration | ASR/TTS linked to meaning |

| Music AI | LLM diffusion pipelines | MusicGen, AudioLDM, T5 | Cross cultural text to-music [342] | Linguistic emotion governs music |

| Multimodal AI | Diffusion based alignment | CLAP, MuLan, AudioCLIP | Shared semantic spaces | Text-guided retrieval and remixing |

2025 | Audio AI | Symbolic neural integration | MR NLP, DAX™, Voice VR [343] | Human in the loop reasoning | Explainable systems for health [344] |

| Music AI | Diffusion Transformer hybrids | Udio T5, Jukebox-XL | Instruction tuning & RLHF [345][346] | Co creative music with latent language |

| Multimodal AI | Language-conditioned multistream | AudioLM, MusicLM v2, LoRA | Unified text audio music pipelines | Language as cognitive interface |

The evolution of Audio AI architectures between 2021 and 2025 reflects a shift from signal based perceptual systems toward models capable of interpreting linguistic meaning and emotional nuance in sound. Early work relied on CNN, CRNN, and BiLSTM-based architectures [347], such as DeepSpeech 2 and PANNs, which processed MFCC [348] and spectrogram features for Automatic Speech Recognition (ASR) [349][350] and Speech Emotion Recognition (SER) [351]. A paradigmatic transition emerged in 2022 with self-supervised pretraining, redirecting Audio AI from pattern recognition to representation learning [352]. Models such as Wav2Vec 2.0 and HuBERT learned phonetic and linguistic structures directly from raw waveforms via masked-prediction mechanisms [353], improving cross-lingual generalization and reducing Word Error Rate (WER) by up to 20 %. Concurrently, Transformer architectures introduced attention-based alignment for speech translation and emotion recognition tasks [354]. By 2023, SpeechT5 proposed a multi-task encoder decoder Transformer [355] that unified ASR, Text-to Speech (TTS), and speaker identification within a shared latent space. In 2024, task-oriented semantic modeling became prominent. SpeechSQLNet enabled direct speech-to-structured-query conversion without an intermediate ASR stage by jointly modeling phonetic and syntactic features [356]. In TTS [357], while Stack Transformer architectures increased semantic parsing accuracy by up to 12 %. By 2025, Modified Rule-based NLP (MR NLP) framework combined linguistic rules with human-in-the-loop refinement to support ethically contextualized analysis of elderly speech in Smart Voice Assistant systems [358]. Applications such as DAX™ achieved time efficiency gains of up to 30 % in automated medical documentation, while SVM-based voice biomarker detection for depression reached an AUC of 0.93 [359]-[361]. Extensions including the Voice VR Navigation Model and the GAD-7 Alexa Interface further expanded Audio AI into mental health monitoring and immersive interaction. A symbolic neural hybrid phase integrating linguistic rules, affective states, and human context within unified frameworks [362][363]. This progression not only improved technical performance but also expanded the cognitive capacity of Audio AI systems to interpret intent, emotion, and meaning in spoken communication.

Between 2021 and 2025, Music AI architectures evolved from sequential statistical models toward generative systems in which language functions as the primary mediator of musical meaning [364]. Early approaches relied on CNN [365] and LSTM [366] architectures with acoustic and lexical features to support basic lyric melody embedding [367] and emotion classification, enabling initial linguistic acoustic alignment. From 2022 onward, Transformer enhanced models introduced prosody syntax emotion alignment [368], allowing music representations to capture contextual and affective semantics [369]. By 2023, multimodal generative frameworks treated music as a form of linguistic expression through text-conditioned learning. This trajectory culminated in 2024-2025 with diffusion Transformer pipelines that support instruction driven, co-creative music generation [67], positioning Music AI as a semantic and affective extension of NLP rather than a purely acoustic modeling task.

Between 2021 and 2025, Multimodal AI architectures evolved from monomodal encoders toward language conditioned systems that unify audio, music, and text within shared semantic spaces [370]. This evolution culminated in 2024-2025 with diffusion based and multistreaming without crossBmodal awareness. From 2022, dual encoder contrastive architectures enabled joint latent representations, positioning language as a semantic mediator across modalities. By 2023, text-conditioned Transformer-based models supported direct control of audio and music generation through linguistic prompts [371]. This evolution culminated in 2024-2025 with diffusion-based and multistream architectures that integrate text, audio, and music within a single pipeline, establishing language as a cognitive interface that coordinates perception, generation, and cross-modal reasoning.

- The Central Role of NLP as a Semantic Bridge

The findings of this review indicate that the most fundamental transformation in Audio, Music, and Multimodal AI between 2021 and 2025 is the elevation of Natural Language Processing (NLP) from a supporting component to a semantic core across domains. In early systems, language primarily served as a transcription or annotation layer. With the emergence of self-supervised and Transformer-based architectures, however, NLP became the central representational mechanism that mediates acoustic perception, contextual interpretation, and generative synthesis. In Audio AI, NLP links raw speech signals to intent, pragmatics, and affective meaning. In Music AI, language governs emotional and structural composition through text-conditioned modeling [39], positioning linguistic prompts as drivers of musical generation [59]. In Multimodal AI, NLP establishes shared semantic spaces that align text, sound, music, vision, and contextual signals within unified representations. Across domains, language no longer operates as metadata but as the organizing principle through which heterogeneous modalities are interpreted and integrated. This convergence reflects an epistemic transition from signal-level decoding to meaning-centered, multimodal intelligence. The core shift is not merely architectural but conceptual: sound is redefined as a linguistic and affective construct embedded in semantic space. NLP thus functions as the unifying explanatory axis that enables cross-modal reasoning, affective alignment, and co-creative generation in contemporary Audio and Music AI.

- Increasing Multimodal Convergence

Another central finding of this review is the increasing convergence of modalities in Audio, Music, and Multimodal AI between 2021 and 2025. Early systems were largely unimodal or dual-modal, focusing on isolated processing of speech, music, or text. Audio models primarily analyzed acoustic signals, while Music AI systems processed lyrics and musical features separately. Cross-modal interaction was limited to descriptive alignment, such as text audio retrieval or captioning tasks. Over time, however, architectures evolved toward deeper multimodal integration. The emergence of contrastive learning, shared embedding spaces, and Transformer-based fusion mechanisms enabled joint modeling of text, audio, music, vision, and affect. Systems no longer treated modalities as parallel streams but as interconnected components within unified semantic frameworks. This shift is visible in the progression from dual-modality systems (text audio) to tri- and quad-modality integration (text audio music emotion), and ultimately toward penta- and hexa-modality architectures that incorporate visual context, social signals, and memory.

Importantly, this convergence is not merely technical but epistemic. Multimodal integration reflects a redefinition of intelligence in which meaning emerges through the interaction of heterogeneous signals rather than from a single modality. Language plays a stabilizing role within this convergence, providing structured symbolic grounding that aligns perception, emotion, and contextual reasoning across modalities. By 2025, multimodal systems increasingly operate as integrated ecosystems capable of cross-modal generation, adaptive dialogue, and context-aware synthesis. The trajectory indicates a move from modular AI pipelines toward cognitively inspired architectures in which modalities are dynamically coordinated within shared semantic spaces. This growing multimodal convergence thus reinforces the broader paradigm shift toward meaning-centered, language-mediated artificial intelligence.

- Comparison with Previous Studies

Previous review studies in Audio and Music AI have typically concentrated on specific subdomains or technical perspectives. For example, surveys on music recommendation systems have focused on personalization pipelines and metadata integration, while reviews on sound event detection have emphasized recognition-oriented architectures and benchmark datasets. Other works have examined generative audio models primarily from ethical, governance, or application-driven perspectives [372]. Although these studies provide valuable domain-specific insights, their scope remains largely task-oriented and technically segmented. In contrast, the present review adopts a longitudinal and cross-domain perspective spanning 2021-2025. Rather than examining a single task (e.g., ASR, genre classification, or music generation) or a single paradigm (e.g., contrastive learning or diffusion models) [373], this study integrates Audio AI, Music AI, and Multimodal AI within a unified Domain-Modality-Technique-Task (D-M-T-T) framework. This structure enables systematic comparison across domains and reveals patterns of convergence that are not visible in isolated reviews. Moreover, while previous surveys tend to describe architectural advancements, this review advances a conceptual interpretation of the observed transformation. Specifically, it identifies the elevation of Natural Language Processing as a semantic bridge that mediates perception [280], expression [374], and generation across modalities [123]. By framing the evolution as an epistemic transition from signal-level recognition [375] to meaning-centered multimodal intelligence [376], this study extends beyond technical taxonomy toward theoretical synthesis. Therefore, compared to prior reviews, the contribution of this work lies not only in updated coverage of recent models and datasets, but in providing a unifying explanatory perspective that connects architectural evolution, modality expansion, and semantic integration within a single analytical framework.

- Implications and Theoretical Interpretation

The findings of this review carry significant theoretical implications for the understanding of contemporary Artificial Intelligence in audio and music domains. First, the observed transition from signal-level processing to language-mediated semantic modeling suggests that intelligence in Audio and Music AI can no longer be interpreted solely through the lens of feature extraction and classification. Instead, intelligence increasingly emerges from the interaction between acoustic perception and symbolic linguistic representation. This reconfiguration positions language not as a peripheral annotation layer, but as a structural mechanism for meaning construction. Second, the increasing convergence across Audio AI, Music AI, and Multimodal AI implies a shift from domain-specific modeling toward integrative cognitive architectures. The expansion from unimodal to multi-, and eventually hyper-modal systems indicates that meaning is generated through coordinated cross-modal alignment rather than isolated signal decoding. Theoretically, this suggests that semantic coherence arises from shared representational spaces where linguistic, affective, and perceptual features are jointly embedded. Third, the elevation of NLP as a semantic bridge reframes the epistemic status of sound. Rather than being treated as a purely physical phenomenon, sound is increasingly modeled as a communicative and affective construct embedded within broader symbolic systems. This interpretation supports the view that Audio and Music AI are evolving toward cognitively inspired frameworks in which perception, reasoning, and generation are interlinked through structured language representations. Within the D-M-T-T framework, these developments demonstrate that domain evolution (Audio, Music, Multimodal), modality expansion, architectural innovation, and task diversification are not independent trajectories. Instead, they converge around a shared theoretical axis: the mediation of meaning through language. The principal theoretical implication of this study, therefore, is that the core transformation in Audio and Music AI is epistemic rather than merely technical. It reflects a redefinition of artificial intelligence from signal recognition systems to meaning-centered, multimodal semantic architectures.

- Analysis of Gaps and Challenges