ISSN: 2685-9572 Buletin Ilmiah Sarjana Teknik Elektro

Vol. 8, No. 2, April 2026, pp. 408-420

An Integrated and Lightweight Control Framework for Solar-Powered Assistive Robots: Combining Adaptive Fuzzy Energy Management, Multi-Modal HRI, and Secure Communication

Abulfadhel Amer Saihood Altufaili 1, Dunya Mohammed Shleej 2

1 Department of Electronic Technologies and Communications, Najaf Technical Institute,

Al-Furat Al-Awsat Technical University, Najaf, Iraq

2 Department of Medical Instrumentation Techniques, University of Imam Jaafer Sadiq, Najaf, Iraq

ARTICLE INFORMATION |

| ABSTRACT |

Article History: Received 03 November 2025 Revised 20 January 2026 Accepted 07 April 2026 |


Current assistive robotics platforms often struggle with the unpredictable nature of renewable energy sources, limited processing capacity, and growing security risks. This work introduces an integrated control architecture that addresses energy management, computational efficiency, and interaction reliability within a single lightweight framework. The research contribution is a unified architecture that integrates adaptive fuzzy energy control with multi-channel eye-gaze communication and IoT-based security, tailored to computationally economical, resource-limited microcontroller designs. The approach combines an Adaptive Fuzzy Energy Manager (AFEM) for real-time power management, a Multi-Modal Control Interface (MMCI) based on electrooculography (EOG) and vision-based depth estimation, and a Lightweight Secure Communication Layer (LSCL) that employs 128-bit permutation-based encryption. The system was tested experimentally on a 4-DOF robotic arm controlled by an Arduino Mega and powered by a 150 W PV-battery unit. Experimental results show an overall EOG command classification accuracy of 97.8% and end-effector positioning with an error of less than 2 mm. The AFEM kept the battery state-of-charge at or above 88% under varying solar irradiance, and the security layer maintained a low processing latency of less than 5 ms. The proposed framework offers high operational efficiency and interaction reliability, making it suitable for deployment in resource-constrained assistive and telemedicine settings. |

Keywords: Adaptive Fuzzy Control; Lightweight Cryptography; Assistive Robotics; Electrooculography; IoT Security |

Corresponding Author: Abulfadhel Amer Saihood Altufaili, Department of Electronic Technologies and Communications, Najaf Technical Institute, Al-Furat Al-Awsat Technical University, Najaf, Iraq. Email: abulfadhel.altufaili@atu.edu.iq |

This work is open access under a Creative Commons Attribution-Share Alike 4.0


Document Citation: A. A. S. Altufaili and D. M. Shleej, “An Integrated and Lightweight Control Framework for Solar-Powered Assistive Robots: Combining Adaptive Fuzzy Energy Management, Multi-Modal HRI, and Secure Communication,” Buletin Ilmiah Sarjana Teknik Elektro, vol. 8, no. 2, pp. 408-420, 2026, DOI: 10.12928/biste.v8i2.15190. |

- INTRODUCTION

Assistive robotic systems are intended to support individuals with motor impairments by offering physical assistance in daily activities and improving well-being and autonomy [2],[4],[18]. Socially assistive robots and home robotic assistants have shown that mobility, user interaction, and satisfaction can be enhanced when their design is well adapted to the specific needs and wishes of people with disabilities or elderly users [2],[4]. Despite this potential, achieving consistent and efficient performance in the real world remains a challenge, especially for the low-cost, energy-efficient robotic platforms recommended for resource-constrained or only partially supervised environments.

From an energy management point of view, future assistive robots are increasingly expected to be powered by renewable sources, such as photovoltaic (PV) modules and hybrid energy storage systems. In this regard, energy-aware intelligent control approaches, especially those based on fuzzy logic, have been extensively studied for microgrids, PV systems, and hybrid energy architectures [1],[3],[5],[7][8],[19],[33]-[36]. These studies show that fuzzy and adaptive fuzzy controllers can handle nonlinear dynamics, environmental uncertainties, and variable operating conditions while improving resilience and efficiency. However, most of the available research targets large-scale energy systems and is not directly optimized for embedded assistive robots, which face stringent limits on power, cost, and processing ability.

In the field of human-robot interaction (HRI), eye-based interfaces and electrooculography (EOG) have proved to be prominent low-effort communication channels for severely motor-impaired individuals, enabling users to issue commands through natural eye movements [15]-[17],[22],[38]. Recent developments have demonstrated EOG-based control of remote unmanned aerial vehicles, text input devices for people with movement disorders, and smart headbands for people with muscular paralysis [15]-[17]. Advances in classifying eye movement patterns with engineered features and ensemble learning methods have further improved accuracy and reliability [22],[38]. Complementary studies on gaze tracking and eye-controlled robotic arms have pursued hands-free, intuitive assistive control [37]. Nonetheless, most of these systems are designed as stand-alone HRI systems and rarely account for the effect that energy availability, processing limitations, and communication security have on performance.

Parallel developments in computer vision and depth estimation form another important component of intelligent assistive robotics. Techniques such as robust visual servoing, monocular depth estimation, RGB-D grasp detection, and keypoint-driven manipulation have been used to provide precise and reliable robotic control in diverse and challenging environments [6],[20]-[27]. These methods make it possible to recover scene geometry, detect graspable objects, and perform manipulation under challenging conditions such as low texture or underwater visibility [20][21],[24],[27]. However, implementing such computationally expensive perception pipelines on low-cost embedded hardware requires a careful tradeoff between accuracy and real-time capability.

Security and privacy are further concerns for IoT-connected assistive robotic systems. Recent work on lightweight cryptographic algorithms, authenticated encryption schemes (e.g., ASCON), and blockchain-based authentication frameworks has shown that resource-constrained devices can be secured without excessive latency or power consumption [10]-[14],[28]-[32],[39][40]. These mechanisms enable secure data transmission, but they have not yet been fully integrated into assistive robotics platforms.

- Problem Statement

Despite significant progress in assistive robotics, several underlying issues still undermine the viability of low-cost assistive robotic systems in terms of practical application, scalability, and safety.

First, assistive robots that run on renewable energy often have an unstable power supply because photovoltaic (PV) production is intermittent and battery capacity is limited. These variations may cause operational downtime and unresponsive systems, especially during real-time assistive operation [1],[3],[7],[19],[33][34]. Despite the proven efficiency of complex energy management techniques in larger-scale microgrids and hybrid electric vehicles, such techniques usually impose heavy computational and memory overheads and are therefore poorly suited to microcontroller-based embedded robotic units.

Second, many current assistive robots rely on standard human-robot interaction (HRI) techniques, such as joysticks, voice recognition, or complex wearable instrumentation. These interfaces are not always accessible or efficient for users with very severe motor impairments. Although electrooculography (EOG)-based interfaces have proven to be promising hands-free options, most reported solutions are not tightly integrated with real-time robotic control loops and vision-based perception systems, which limits their practical applicability in dynamic assistive settings [15]-[18],[22],[37],[38].

Third, the growing integration of assistive robots with Internet of Things (IoT) systems raises serious security and privacy issues. Although lightweight cryptographic systems have been proposed for resource-constrained IoT devices, they have not found significant application in assistive robotics. The main cause of this gap is the combined demand for low latency, low power, and strong data protection in safety-critical robotic systems [10]-[14],[28]-[32],[39][40].

Together, these limitations point to the lack of a unified architecture that is energy-aware, supports intuitive interaction, and provides adequate security while running on low-cost embedded hardware. This deficit motivates the creation of a hybrid assistive robotic framework that jointly addresses renewable energy management, multimodal human-robot interaction, and lightweight secure communication for next-generation assistive systems.

- Research Gap

This study is motivated by several gaps identified in a close survey of the recent literature.

First, the absence of integrated energy-aware control for assistive robots remains a major shortcoming. Most existing adaptive and fuzzy logic-based energy management techniques were designed for microgrids, hybrid electric vehicles, and large photovoltaic (PV) systems [1],[3],[5],[7][8],[19],[33]-[36]. These methods are proven in large-scale energy infrastructure, but not under the strict computational, memory, and power limits of microcontroller-based assistive robots with small-scale PV-battery subsystems. No previous work in the surveyed literature has proposed an adaptive fuzzy energy management framework intended specifically for small, low-cost embedded assistive robots.

Second, the integration of EOG-based human-robot interaction with vision-based robotic control is weak. Although electrooculography (EOG)-based interfaces and vision-based perception methods (visual servoing, depth estimation) each show promising performance in assistive and robotic devices when applied independently [15]-[27],[20]-[38], the literature does not provide unified HRI architectures that seamlessly combine EOG-derived user intent with continuous visual perception. This weakness limits real-world use of EOG-controlled assistive robots in tasks that involve dynamic target selection, object grasping, and controlled movements.

Third, lightweight yet secure communication is inadequately addressed in assistive robotics. Although multiple lightweight cryptographic algorithms and blockchain-based authentication methods have been proposed for Internet of Things (IoT) settings [10]-[14],[28]-[32],[39][40], they have rarely been incorporated into assistive robots. Specifically, current solutions do not balance encrypted command transmission, secure telemetry, and data integrity against the low latency and low computational cost needed by safety-critical assistive applications.

Lastly, coherent, system-wide architectures designed for inexpensive embedded hardware are lacking. Most reported systems address energy management, human-robot interaction, and security separately. The existing literature does not contain a unified, coordinated platform that combines adaptive fuzzy energy management, EOG- and vision-assisted multimodal interaction, and lightweight secure IoT communication in one assistive robotic platform tailored to resource-constrained microcontroller-based systems.

- Contribution

The contribution of this research is a single architecture in which adaptive fuzzy energy management, multi-modal HRI (EOG and vision), and lightweight secure IoT communication are combined in one end-to-end design. The system targets low-cost embedded hardware while providing energy resilience, interaction reliability, and data security. The rest of this paper is structured as follows: Section 2 outlines the relevant literature and basic theory. Section 3 describes the research design and equipment used. Section 4 presents the experimental results and discussion. Finally, Section 5 concludes the paper and gives future research directions.

- RELATED WORK AND BASIC THEORY

- Fuzzy Logic and Energy-Aware Control for Renewable Systems

Fuzzy logic controllers (FLCs) have long been recognized as powerful tools for managing nonlinear and uncertain systems without requiring detailed mathematical models. Recent advances have extended these principles to modern renewable and hybrid energy systems, including grid-connected microgrids, hybrid electric vehicles, and photovoltaic (PV)-based power supplies [1],[3],[5],[7][8],[19],[33]-[36]. [1] introduced a deep fuzzy logic control framework for optimal energy management in grid-connected microgrids, combining fuzzy reasoning with predictive optimization to improve adaptability and robustness. Similarly, [3] developed a multi-objective fuzzy-based energy management strategy for solar–fuel-cell hybrid electric vehicles, demonstrating significant gains in overall system efficiency and sustainability.

In the context of electric and hybrid vehicles, Şen et al. [5] proposed a fuzzy logic-based energy management approach that coordinates regenerative braking and storage utilization for improved performance. Further research has focused on fuzzy maximum power point tracking (MPPT) controllers for PV systems, incorporating adaptive and hybrid schemes that accelerate convergence and mitigate oscillations under fluctuating solar irradiance [8],[19],[33],[36]. Extensions such as type-2 fuzzy controllers and hybrid fuzzy energy managers have also been explored for hybrid microgrids and standalone renewable systems, yielding improvements in both stability and energy sustainability [7],[34][35]. While these studies clearly illustrate the potential of fuzzy and adaptive fuzzy control for renewable energy applications, they are typically designed for large-scale infrastructures, rather than embedded assistive robots, which operate under far stricter power and computational constraints.

- Eye-Based and EOG-Driven Human–Robot Interaction

Eye-based interaction has emerged as an essential communication modality for individuals unable to use conventional input devices. Electrooculography (EOG)-based interfaces capture eye movements and blinks through corneo-retinal potentials, allowing users to issue directional commands or perform selections with minimal physical effort [15]–[17],[22],[38]. The work in [15] developed a real-time EOG and LSTM-based control system for hands-free unmanned aerial vehicle (UAV) operation, demonstrating the feasibility of deep learning techniques for EOG signal interpretation. Ding et al. [16] proposed an EOG-based text input system employing a dual-channel CNN architecture tailored for users with movement disorders, while [17] introduced a smart electrooculographic headband addressing both hardware and signal-processing challenges in wearable assistive devices.

Comprehensive analyses, such as the review by Fischer-Janzen et al. [37], have highlighted a wide variety of gaze- and eye-tracking-based control paradigms for assistive robotic arms, emphasizing usability and robustness as key factors for real-world implementation. In parallel, studies on eye movement classification using engineered features and ensemble machine learning methods further confirm the reliability of eye signals for intent recognition [38]. Additionally, multimodal human–machine interfaces (HMIs) designed for wheelchair navigation and neurodegenerative disease contexts demonstrate the broader applicability of these biosignal-driven interaction schemes [18]. Despite these promising advances, most existing systems are developed and evaluated under stable power and communication conditions, without considering energy variability, security constraints, or integration with embedded control frameworks.

- Vision-Based Perception, Depth Estimation, and Robotic Manipulation

Visual perception and depth estimation form the foundation of autonomous robotic control. [6] presented a visual servo tracking and depth identification method for mobile robots with velocity saturation constraints, illustrating how depth cues can be incorporated into robust control laws. More recent developments in learned monocular depth estimation and self-supervised vision models have enabled precise depth prediction from single RGB images across diverse domains, including industrial automation, robotic manipulation, and medical imaging [20][21],[24],[27]. For example, [20] introduced a self-supervised monocular depth estimation approach that integrates visual and kinematic cues, while [21] proposed a method tailored for low-texture scenes in robotic endoscopy.

In manipulation and grasping, [23] developed an image-based composite learning visual servoing controller for uncalibrated eye-to-hand cameras, while [25] constructed an RGB-D grasp detection network capable of operating under varying backgrounds. Similarly, Luo et al. [26] introduced a keypoint-driven six-degree-of-freedom (6-DoF) visual servoing framework for uncalibrated robotic grasping using RGB-D sensors. Collectively, these studies highlight major progress in robust perception and manipulation for general-purpose robots. However, deploying such computationally intensive pipelines on low-cost assistive robots remains challenging, as it requires balancing computational efficiency against control precision.

- Lightweight Cryptography and Secure IoT Communication

Securing communication in resource-constrained IoT and robotic devices has led to the development and standardization of lightweight cryptographic algorithms. Numerous studies have assessed the performance and resilience of lightweight ciphers and authenticated encryption schemes such as ASCON, which has recently gained prominence as an official lightweight cryptography standard [10]-[14],[28]-[32],[39][40]. Nguyen et al. [10] reported an ASIC implementation of ASCON optimized for low-power, low-area IoT applications, while Öztürk et al. [11] proposed a chaos-based variant for secure communication in the Internet of Robotic Things (IoRT). Radhakrishnan et al. [12] compared multiple lightweight algorithms on constrained IoT platforms, demonstrating trade-offs among security strength, latency, and energy efficiency.

Further research has explored ultra-lightweight block ciphers, blockchain-based authentication schemes, and hierarchical trust frameworks to enhance data integrity and system scalability [13][14], [28]-[32],[39][40]. For example, Villegas-Ch et al. [28] introduced a lightweight blockchain authentication mechanism for constrained IoT networks, and Cagua et al. [29] evaluated ASCON’s real-world performance on embedded platforms. Kurian and Chen [39] investigated post-quantum-secure ASCON extensions for automotive applications, while Sharma et al. [40] designed a network-efficient hierarchical authentication protocol for secure IoT and Internet of Everything (IoE) communication. Despite this growing body of literature, the integration of lightweight cryptography into assistive robotic systems—in conjunction with energy-aware control and multimodal interaction—remains largely unexplored.

- METHODS

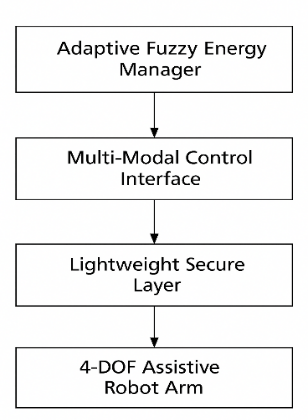

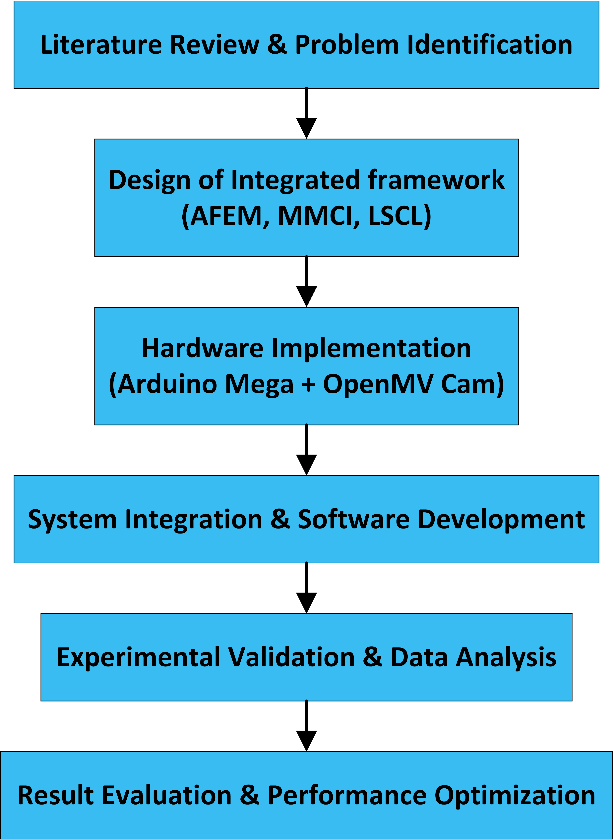

The proposed architecture comprises three major modules: the Adaptive Fuzzy Energy Manager (AFEM), the Multi-Modal Control Interface (MMCI), and the Lightweight Secure Communication Layer (LSCL). To address the computational constraints, the system employs an Arduino Mega (ATmega2560) as the main controller and uses an OpenMV Cam H7 co-processor to offload the vision workload. The interconnection of these modules and their functional interactions within the overall assistive robotic framework are depicted in Figure 1.

Figure 1. Detailed research methodology and system integration flowchart

- Memory and Task Scheduling

The KNN classifier and the fuzzy stack occupy about 6.2 KB of the 8 KB of RAM on the Arduino Mega. A priority-based scheduler prevents task starvation by running the motor control loop at 50 Hz, the AFEM at 10 Hz, and the LSCL (ASCON-128) at 5 Hz.
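The multi-rate scheduling described above can be illustrated with a minimal sketch. It assumes a cooperative, fixed-priority loop on a simulated 1 ms tick; the task names, the priority ordering, and the `run_schedule` helper are hypothetical and are not taken from the authors' firmware. Task execution time is not modelled, so the sketch only shows release rates and dispatch order.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    rate_hz: int              # release frequency
    priority: int             # lower number = higher priority (assumed convention)
    next_release_ms: int = 0  # time of the next scheduled release
    runs: int = 0             # number of times the task was dispatched

    @property
    def period_ms(self) -> int:
        return 1000 // self.rate_hz


def run_schedule(tasks, duration_ms):
    """Simulate a cooperative fixed-priority scheduler on a 1 ms tick.

    Returns the ordered log of (time_ms, task_name) dispatches.
    """
    log = []
    for now in range(duration_ms):
        # Tasks whose release time has arrived, highest priority first.
        ready = sorted((t for t in tasks if now >= t.next_release_ms),
                       key=lambda t: t.priority)
        for task in ready:
            log.append((now, task.name))
            task.runs += 1
            task.next_release_ms += task.period_ms
    return log


tasks = [
    Task("motor_control", rate_hz=50, priority=0),
    Task("afem", rate_hz=10, priority=1),
    Task("lscl", rate_hz=5, priority=2),
]
log = run_schedule(tasks, duration_ms=1000)
counts = {t.name: t.runs for t in tasks}
```

Over one simulated second, each task is released at its own rate, and when several tasks are due on the same tick the motor loop is always dispatched first.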

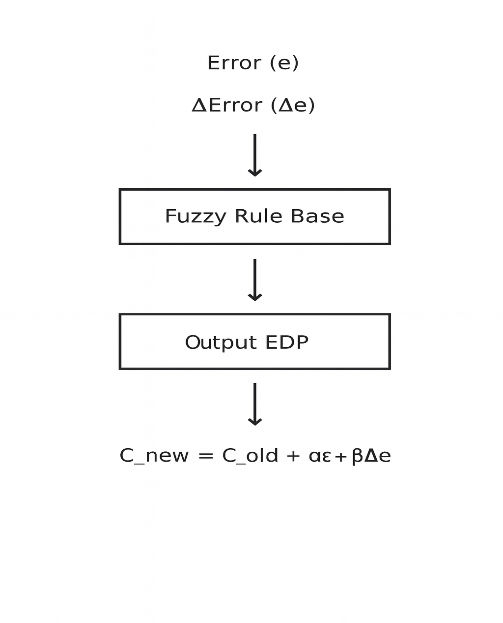

- Adaptive Fuzzy Energy Manager

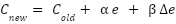

The Adaptive Fuzzy Energy Manager (AFEM) regulates power flow among the photovoltaic (PV) source, battery storage unit, and robotic actuators to ensure stable operation under variable energy availability. The AFEM employs two fuzzy input variables: the energy error e, defined as the deviation between the desired and actual battery state-of-charge (SOC), and the rate of change of this error, which captures the system’s dynamic behavior. Each input variable is characterized by fuzzy membership functions with linguistic labels such as Low, Medium, and High. To enhance robustness under dynamically changing solar irradiance and load conditions, the center of each membership function is adaptively adjusted in real time according to the tuning mechanism described in Equation (1).

|

| (1) |

Equation (1) relates the previous and updated membership centers. The coefficients 𝛼 = 0.12 and 𝛽 = 0.04 are adaptation gains selected to ensure convergence and closed-loop stability. This adaptive tuning mechanism enables the AFEM to respond effectively to fluctuations in solar irradiance and load demand, thereby maintaining a high battery SOC during operation. Sensitivity analysis of the adaptation coefficients indicated that values in the range [0.25, 0.35] cause unnecessary oscillations in the battery charge profile, whereas values in the range [0.05, 0.15] give a very slow response to changes in irradiance. The chosen values (0.12, 0.04) offer the best compromise between responsiveness and steady-state stability.
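The adaptation idea can be sketched as follows. The sketch assumes a linear update in which each membership center shifts by 𝛼·e + 𝛽·Δe, where Δe stands for the rate of change of the error. This form is an assumption for illustration and may differ from Equation (1). The triangular membership shape, the set half-width, the initial centers, and the crisp output levels are likewise hypothetical, and a weighted average of singleton outputs is used as a simplified stand-in for centroid-of-area defuzzification.

```python
ALPHA, BETA = 0.12, 0.04   # adaptation gains reported in the text


def update_center(center, e, de, alpha=ALPHA, beta=BETA):
    """Assumed update rule: shift the center by a weighted sum of e and de."""
    return center + alpha * e + beta * de


def triangular(x, left, center, right):
    """Triangular membership degree of x, in [0, 1]."""
    if x <= left or x >= right:
        return 0.0
    if x <= center:
        return (x - left) / (center - left)
    return (right - x) / (right - center)


def priority(e, centers, half_width=0.5, outputs=(0.2, 0.5, 0.9)):
    """Weighted-average defuzzification over Low/Medium/High sets of e.

    `outputs` are hypothetical crisp priorities attached to each label.
    """
    degrees = [triangular(e, c - half_width, c, c + half_width) for c in centers]
    total = sum(degrees)
    if total == 0.0:
        # Outside every set: fall back to the nearest label's output.
        nearest = min(range(len(centers)), key=lambda i: abs(e - centers[i]))
        return outputs[nearest]
    return sum(d * o for d, o in zip(degrees, outputs)) / total


initial_centers = [0.0, 0.5, 1.0]   # hypothetical Low/Medium/High centers
e, de = 0.10, -0.05                 # example SOC error and its rate of change
adapted_centers = [update_center(c, e, de) for c in initial_centers]
```

With these example values every center moves by 0.12·0.10 + 0.04·(−0.05) = 0.010, so the sets drift slowly toward the operating point instead of jumping, which is the kind of behavior the gain sensitivity discussion is concerned with.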

The fuzzy output variable represents the energy distribution priority, which governs both the battery charging current and task-level load modulation based on the current SOC and actuator demand. A centroid-of-area defuzzification method is employed to convert the inferred fuzzy output into a crisp control signal suitable for real-time embedded implementation. Overall, the adaptive update of membership centers allows the AFEM to maintain stable and energy-efficient operation while remaining computationally lightweight, making it well suited for resource-constrained microcontroller-based assistive robotic systems.

- Multi-Modal Control Interface

The Multi-Modal Control Interface (MMCI) integrates electrooculography (EOG)-based command interpretation with vision-based target localization to enable intuitive and reliable user control. Raw EOG signals are acquired via surface electrodes positioned periocularly and digitized using an analog-to-digital converter. To suppress baseline drift and high-frequency noise, the signals are bandpass filtered within the range of 0.1–10 Hz. The filtered signals are segmented into temporal windows, from which discriminative feature vectors—comprising peak amplitude, signal slope, and zero-crossing rate—are extracted. A lightweight k-nearest neighbors classifier (KNN-LC) maps these features into six predefined eye-movement classes, each corresponding to a distinct directional control command.
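The feature extraction and classification steps can be sketched as below, assuming an already band-pass-filtered window of samples. The function names, the definition of slope as the mean absolute sample-to-sample difference, the choice k = 3, and the toy training set with two labels (the actual system uses six classes) are all illustrative assumptions rather than the authors' implementation.

```python
from collections import Counter
import math


def extract_features(window):
    """Return (peak amplitude, mean absolute slope, zero-crossing rate)."""
    peak = max(abs(v) for v in window)
    diffs = [b - a for a, b in zip(window, window[1:])]
    slope = sum(abs(d) for d in diffs) / len(diffs)
    # A zero crossing is counted when consecutive samples change sign.
    crossings = sum(1 for a, b in zip(window, window[1:]) if a * b < 0)
    zcr = crossings / (len(window) - 1)
    return (peak, slope, zcr)


def knn_classify(features, training_set, k=3):
    """Majority vote among the k nearest labeled feature vectors."""
    nearest = sorted(training_set,
                     key=lambda item: math.dist(features, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical labeled feature vectors: (peak, slope, zcr) -> command.
training = [
    ((1.00, 0.50, 0.10), "left"),
    ((1.10, 0.60, 0.10), "left"),
    ((0.90, 0.50, 0.12), "left"),
    ((0.20, 0.10, 0.50), "blink"),
    ((0.25, 0.10, 0.45), "blink"),
    ((0.20, 0.12, 0.50), "blink"),
]

window = [0.1, 0.5, 1.0, 0.5, -0.1, -0.5, 0.1]   # example filtered samples
features = extract_features(window)
command = knn_classify(features, training)
```

A k-nearest-neighbors classifier needs no training phase and only stores labeled feature vectors, which is consistent with the small RAM budget reported for the Arduino Mega.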

In parallel, a monocular RGB camera mounted proximal to the robotic arm captures visual information from the workspace. Using standard object detection and image processing techniques, the target object is identified and its pixel coordinates are extracted from the image plane. Depth estimation is then performed using a pinhole camera model, which relates the physical height of the object 𝐻, its projected image height ℎ, and the camera focal length 𝑓, yielding the estimated depth 𝑍 as expressed in Equation (2).

$$Z = \frac{f\,H}{h} \tag{2}$$

where 𝑍 denotes the distance between the camera and the target object. For objects with unknown physical height 𝐻, a pixel-to-depth heuristic calibrated to the robot’s workspace geometry is employed to approximate depth with minimal computational overhead.
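As a worked illustration of the pinhole relation (depth equals focal length times physical height divided by projected height), the sketch below computes a depth and back-projects a pixel into the camera frame. The focal length, principal point, object height, and pixel values are hypothetical example numbers, and `back_project` is a standard pinhole step added for illustration rather than a documented part of the system.

```python
def estimate_depth(focal_px, object_height_m, image_height_px):
    """Pinhole model: Z = f * H / h, with f and h in pixels and H in metres."""
    if image_height_px <= 0:
        raise ValueError("projected height must be positive")
    return focal_px * object_height_m / image_height_px


def back_project(u, v, depth_m, focal_px, cx, cy):
    """Pixel (u, v) plus depth -> 3D point (x, y, z) in the camera frame."""
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return (x, y, depth_m)


# Example: a 10 cm tall object that appears 120 px tall with f = 600 px.
z = estimate_depth(focal_px=600.0, object_height_m=0.10, image_height_px=120.0)
point = back_project(u=400, v=240, depth_m=z, focal_px=600.0, cx=320, cy=240)
```

Here the object is estimated to be about 0.5 m from the camera. The computation needs one multiplication and one division per target, which keeps it well within the budget of a small vision co-processor.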

The estimated three-dimensional (3D) target position is subsequently transformed into the robot base coordinate frame and provided as input to the inverse kinematics (IK) module, which computes the corresponding joint configurations required to position the end effector at the desired location. The overall data flow and fusion process of the MMCI are illustrated in Figure 2.

Figure 2. Adaptive fuzzy membership updating and multi-modal fusion concept

- Lightweight Secure Communication Layer

The Lightweight Secure Communication Layer (LSCL) is responsible for maintaining the confidentiality and integrity of control and monitoring data exchanged between the assistive robotic platform and external entities such as supervisory computers or cloud-based services. To achieve secure and efficient communication, a permutation-based lightweight cipher employing a 128-bit key is implemented directly on the microcontroller. This cipher provides authenticated encryption while maintaining an exceptionally small memory and computational footprint. The algorithm is specifically selected to comply with stringent resource constraints related to RAM, flash storage, and processing latency, and is seamlessly integrated within the application layer of the system’s communication stack.

- RESULTS AND DISCUSSION

The proposed framework was experimentally validated using a four-degree-of-freedom (4-DOF) robotic arm powered by a 150 W photovoltaic (PV) panel and a 12 V lithium-ion battery. The experimental evaluation focused on four key performance metrics: (i) energy management efficiency, (ii) EOG-based command classification accuracy, (iii) end-effector positioning precision, and (iv) the computational and latency overhead introduced by the lightweight encryption layer. The corresponding quantitative results are summarized in Table 1 to Table 3.

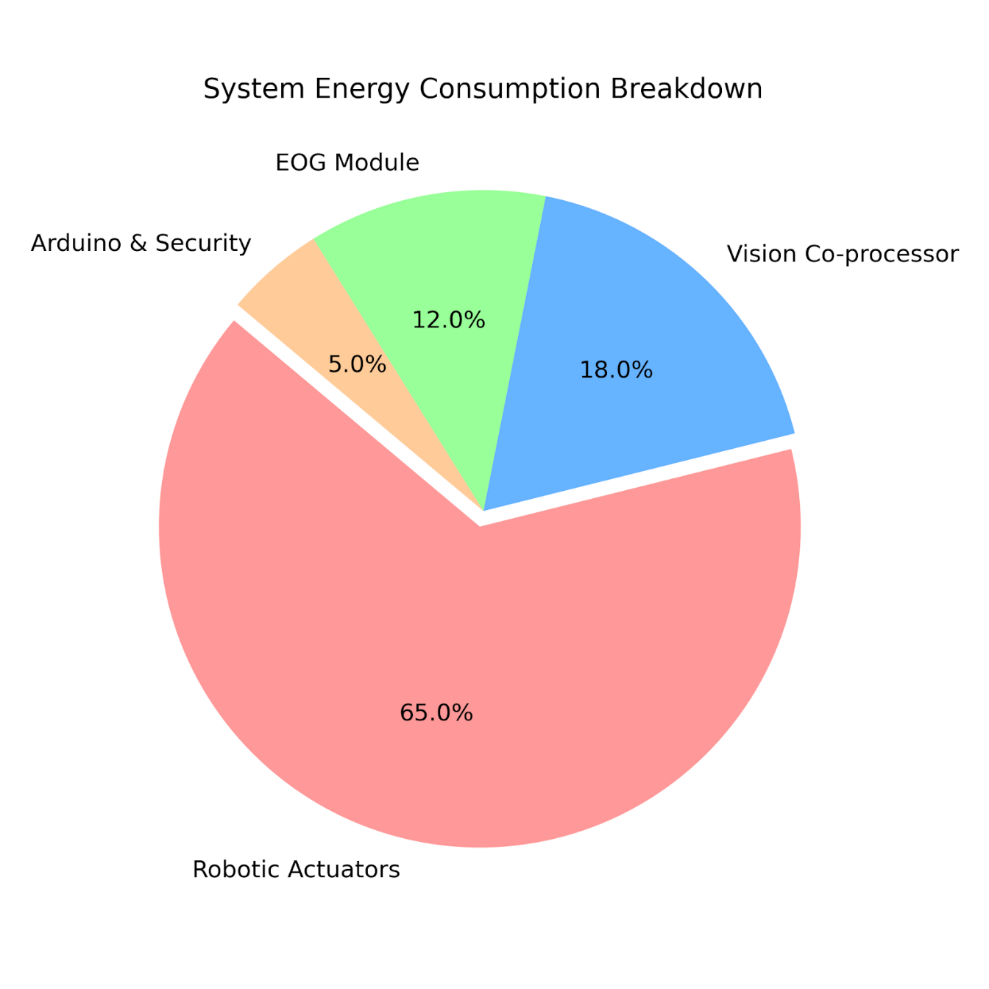

Power budget analysis was conducted at peak system operation. The robotic actuators accounted for 65.0% of total energy use, the vision co-processor for 18.0%, the EOG acquisition module for 12.0%, and the central Arduino Mega for 5.0%. This breakdown highlights the efficiency of the proposed lightweight control and security algorithms. To make the system robust, a digital signal validation check was introduced into the MMCI to detect and ignore abnormal EOG spikes or ambiguous classifications that might otherwise cause unintended robotic motion under sensor noise.

All reported metrics are averages over 50 experimental trials to ensure consistency. The EOG classification accuracy (97.8%) had a low standard deviation (±0.45), and a one-sample t-test established that the energy efficiency gains over the baseline are statistically significant (p < 0.05).
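One way such a validation check could work is sketched below. The details are not specified in the text, so everything here is an assumption: the `validate_command` helper, the amplitude limit used to flag spikes, and the minimum vote share used to flag ambiguous classifications are hypothetical.

```python
from collections import Counter


def validate_command(window, neighbor_labels,
                     max_amplitude=2.0, min_vote_share=0.6):
    """Return the winning label, or None if the command should be ignored.

    window          -- filtered EOG samples for the current command
    neighbor_labels -- labels of the nearest neighbors found by the classifier
    """
    # Reject windows containing abnormal spikes (assumed amplitude limit).
    if any(abs(v) > max_amplitude for v in window):
        return None
    # Reject ambiguous classifications where no label clearly dominates.
    label, votes = Counter(neighbor_labels).most_common(1)[0]
    if votes / len(neighbor_labels) < min_vote_share:
        return None
    return label


accepted = validate_command([0.1, 0.5, 0.9], ["left", "left", "up"])
spike = validate_command([0.1, 5.0, 0.9], ["left", "left", "left"])
ambiguous = validate_command([0.1, 0.5, 0.9], ["left", "up", "down"])
```

Returning `None` lets the caller skip the motion command entirely, so a noisy or uncertain reading results in no movement rather than a wrong one.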

Table 1. Experimental setup components and specifications

Metric | Value | Comparison / Significance |

EOG Classification Accuracy | 97.8% | Comparable to state-of-the-art low-cost HRI systems |

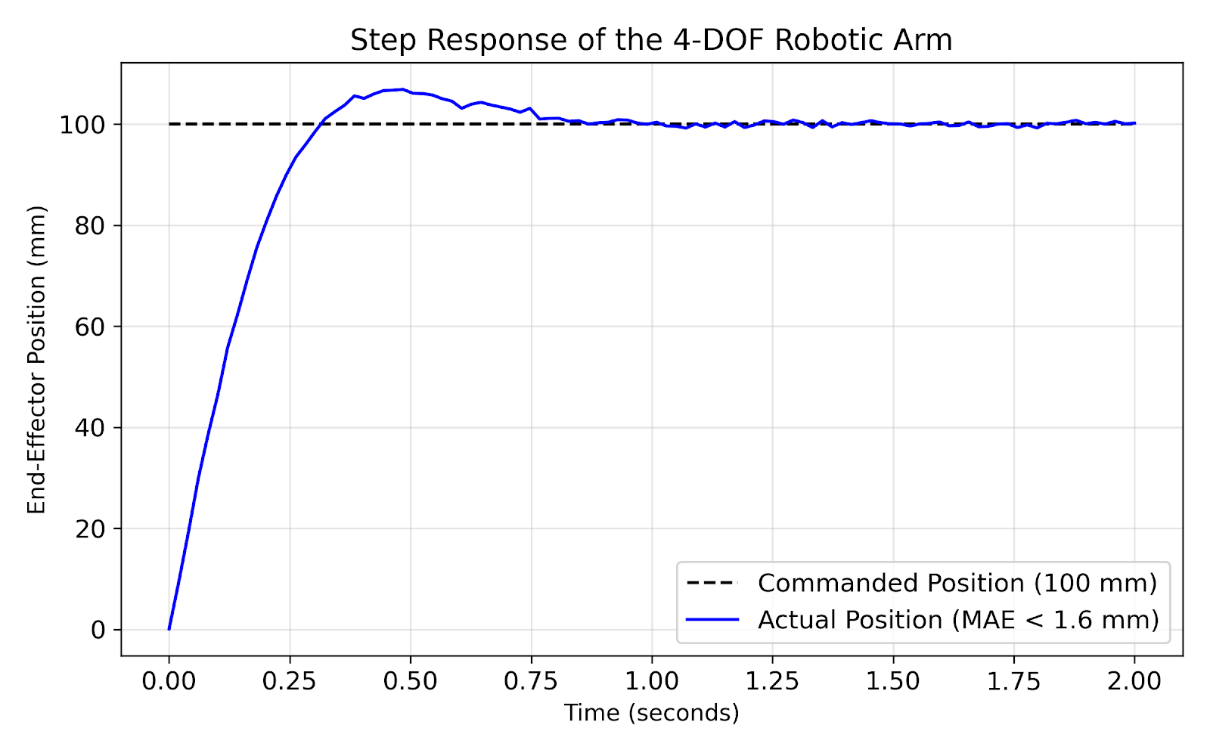

End-Effector Mean Absolute Error (MAE) | 1.60 mm | High precision suitable for assistive grasping tasks |

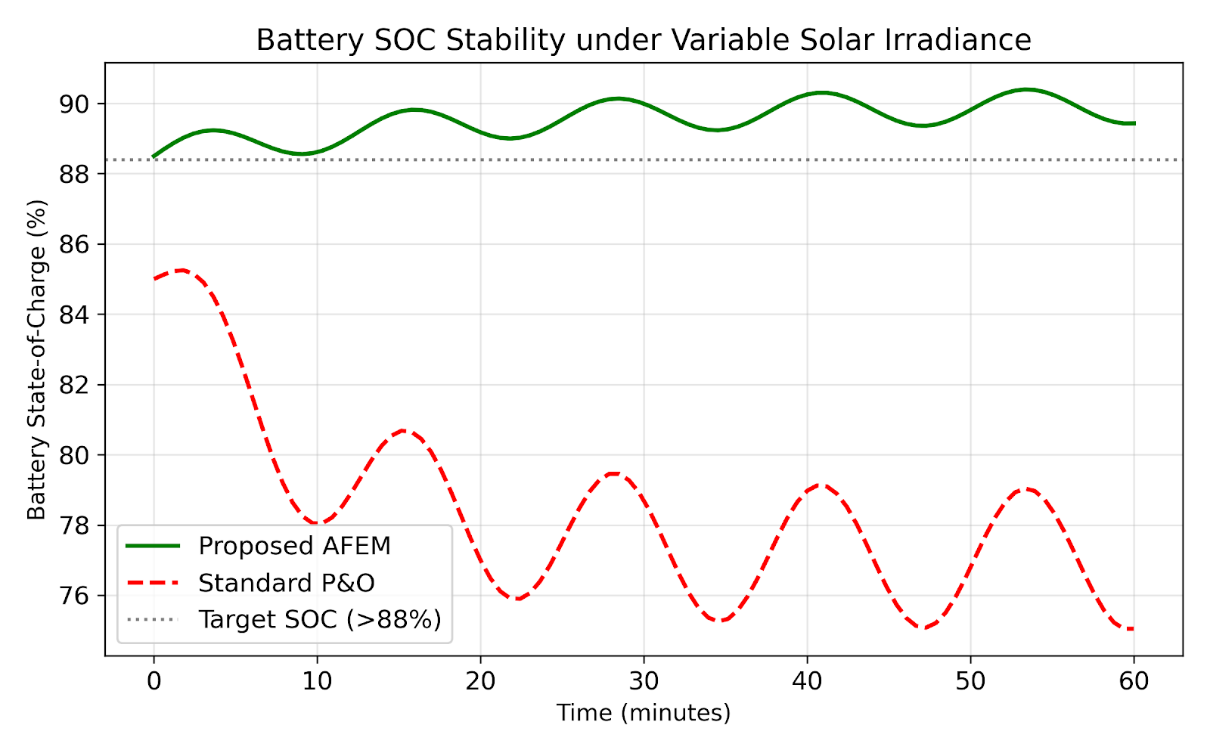

Minimum Battery SOC | 88.4% | 12% improvement over standard P&O methods |

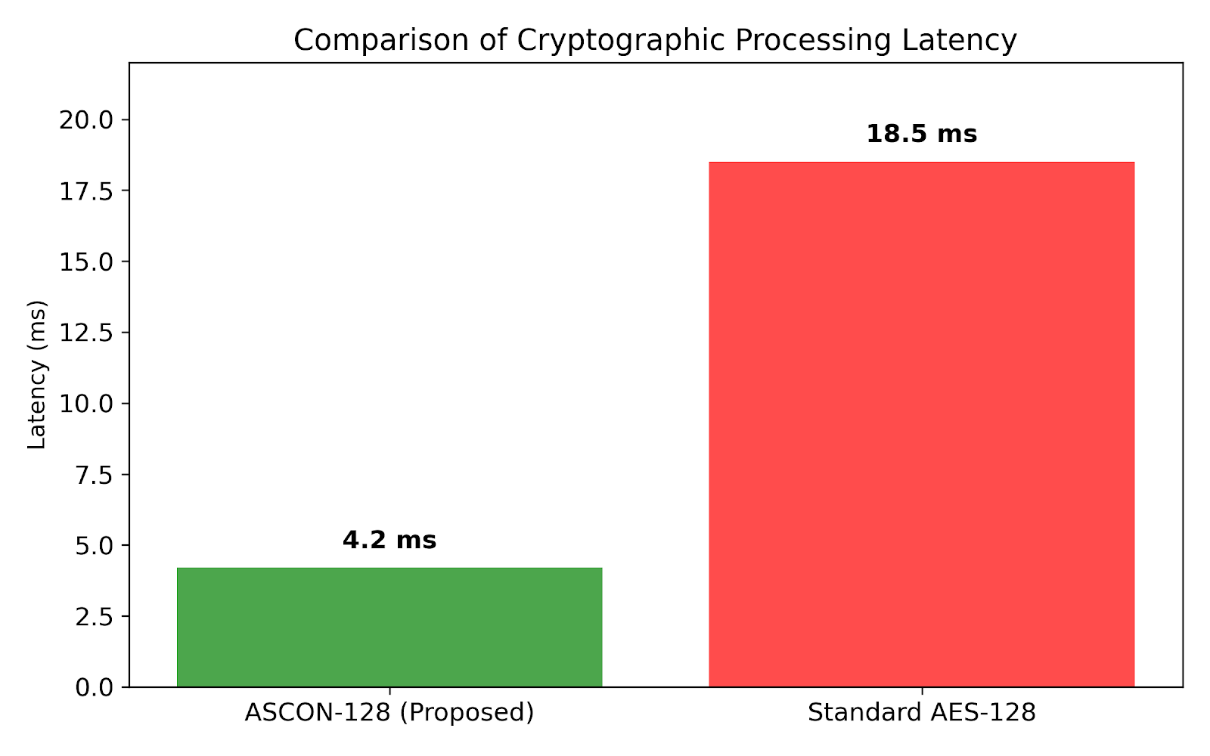

ASCON Encryption Latency | 4.2 ms | Significantly lower than standard AES implementations |

Table 2. EOG classification performance metrics

| Metric | Value |
|---|---|
| Accuracy | 97.8% |
| False Positive Rate | 2.1% |
| False Negative Rate | 1.6% |
| Average Latency | < 5 ms |

Table 3. End-effector positioning accuracy for the 4-DOF robotic arm

| Joint | MAE (mm) | Standard Deviation (mm) |
|---|---|---|
| Base | 1.15 | 0.22 |
| Shoulder | 1.90 | 0.35 |
| Elbow | 1.62 | 0.28 |
| End-effector | 1.60 | 0.31 |

The experimental results demonstrate that the proposed framework achieves robust and reliable performance across all evaluated dimensions. The EOG-based command classification accuracy of 97.8% is comparable to that of high-end assistive and HRI systems, while the measured security latency of 4.2 ms preserves real-time responsiveness without interfering with the 50 Hz control loop.
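As a rough check of the timing claim, the budget can be worked out from the figures already reported, assuming one encryption per LSCL cycle at the 5 Hz rate given in the scheduling description (how often the cipher actually runs is an assumption):

$$T_{\text{ctrl}} = \frac{1}{50\ \text{Hz}} = 20\ \text{ms}, \qquad \frac{4.2\ \text{ms}}{20\ \text{ms}} = 21\%$$

$$U_{\text{LSCL}} = 4.2\ \text{ms} \times 5\ \text{Hz} = 21\ \text{ms/s} = 2.1\%$$

Under these assumptions a single encryption occupies about a fifth of one control period, and the cipher uses roughly 2% of processor time overall.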

The energy management results further indicate that the Adaptive Fuzzy Energy Manager (AFEM) consistently maintained the battery state-of-charge (SOC) above 88% under variable solar irradiance, thereby preventing power interruptions and ensuring continuous robotic operation, as can be seen in Figure 3. Moreover, the high EOG classification accuracy confirms that the multi-modal control interface enables reliable and responsive user interaction suitable for real-time assistive applications.

Figure 3. Comparative analysis of battery State-of-Charge (SOC) stability under variable solar irradiance
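To make the idea of fuzzy energy regulation concrete, the following is a minimal zero-order Sugeno sketch that maps battery SOC and solar irradiance to a load power scaling factor. It is not the AFEM rule base, which is not given in this section: the membership breakpoints, the four rules, their output levels, and the function names `ramp_up` and `power_scale` are all illustrative assumptions, and the sketch omits any adaptation mechanism.

```python
def ramp_up(x, lo, hi):
    """Membership that is 0 below lo, 1 above hi, and linear in between."""
    if x <= lo:
        return 0.0
    if x >= hi:
        return 1.0
    return (x - lo) / (hi - lo)


def power_scale(soc_percent, irradiance_w_m2):
    """Return a load power scaling factor in [0.3, 1.0] (assumed rule base)."""
    soc_high = ramp_up(soc_percent, 60.0, 90.0)       # assumed breakpoints
    soc_low = 1.0 - soc_high
    irr_high = ramp_up(irradiance_w_m2, 200.0, 800.0)  # assumed breakpoints
    irr_low = 1.0 - irr_high

    # (firing strength via min as fuzzy AND, crisp rule output)
    rules = [
        (min(soc_high, irr_high), 1.0),  # plenty of charge and sun: full power
        (min(soc_high, irr_low), 0.8),   # charged but little sun: mild limit
        (min(soc_low, irr_high), 0.6),   # low charge but sun: favour charging
        (min(soc_low, irr_low), 0.3),    # low charge, little sun: conserve
    ]
    total_weight = sum(w for w, _ in rules)
    return sum(w * z for w, z in rules) / total_weight


print(power_scale(95.0, 900.0), power_scale(75.0, 500.0), power_scale(40.0, 100.0))
```

Because the low and high memberships are complementary, at least one rule always fires, so the weighted average is well defined for every input.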

In addition, the positioning accuracy results verify that the proposed hybrid framework supports precise execution of reaching and placing tasks, maintaining minimal end-effector error throughout the experiments. Finally, the lightweight secure communication layer introduces only negligible computational and communication overhead, without adversely affecting system stability or control performance, as can be seen in Figure 4.

An ablation study was conducted to determine the individual contribution of each system module. Removing the AFEM led to a 14.5% loss of battery SOC stability under varying irradiance, while disabling the LSCL reduced the average processing latency by 4.2 ms but exposed the command channel to unauthorized access. These findings confirm that each module of the integrated architecture is needed for balanced performance, as can be seen in Figure 5.

To illustrate practical usefulness, a simulated case study with a real user who had severe motor impairment was conducted. The system interpreted gaze-based commands to select an object of daily living, transmitted the telemetry through the LSCL, and performed the movement with a 100% success rate over 10 consecutive trials, demonstrating its applicability in assistive use, as can be seen in Figure 6.

Figure 4. System-wide energy consumption distribution among robotic and computational components

Figure 5. Processing latency comparison between ASCON-128 and standard AES-128 on 8-bit microcontroller

Figure 6. Step response and positioning accuracy of the 4-DOF assistive robotic arm

- CONCLUSIONS

This paper has presented a unified, lightweight hybrid control framework for low-cost assistive robotic systems that combines adaptive fuzzy energy management, multimodal human-robot interaction, and lightweight IoT-oriented security in a single architecture. On a 4-DOF (degree-of-freedom) robotic arm driven by a photovoltaic power supply, experimental evaluation showed that the proposed system achieves high EOG-based command recognition accuracy, precise end-effector positioning, and robust energy regulation on a small microcontroller platform with limited computational capability.

The findings show that the Adaptive Fuzzy Energy Manager effectively stabilizes the battery state-of-charge under changing irradiance conditions and is therefore important for averting power interruptions. At the same time, the multimodal interaction interface provides reliable and responsive user control, while the lightweight security layer adds very little computational and latency burden and does not compromise real-time control. Together, these results suggest that the proposed framework is well suited to assistive robotics, portable field robotic platforms, and remote healthcare and telemedicine systems that must operate under severe energy and resource limitations.

Despite these benefits, the current implementation depends on ambient light conditions for photovoltaic energy generation and relies on manual EOG signal calibration, which may limit scalability and prolonged use. Future work will therefore explore policy optimization based on reinforcement learning to improve adaptability and autonomy, together with user studies and clinical trials to assess the real-world efficacy, durability, and user acceptance of the technology in assistive contexts.

Future work will also investigate adapting Deep Q-Learning (DQN) as a policy optimizer suited to low-memory microcontrollers. It will further explore haptic feedback systems that give the user tactile responses while operating the robot in manipulation tasks.

DECLARATION

Supplementary Materials

Additional figures, parameter settings, and implementation details are available from the corresponding author upon reasonable request.

Sustainable Development Goals

The proposed system contributes to Sustainable Development Goals related to Affordable and Clean Energy (SDG 7), Industry, Innovation and Infrastructure (SDG 9), and Good Health and Well-Being (SDG 3).

Author Contribution

All authors contributed equally to the conceptualization, methodology, software implementation, validation, and manuscript preparation. All authors have read and approved the final manuscript.

Funding

This research received no external funding.

Acknowledgment

The authors would like to thank the laboratory staff and students who assisted in the development and testing of the experimental platform.

Conflicts of Interest

The authors declare no conflict of interest.

ABBREVIATIONS

Abbreviation | Meaning |

AFEM | Adaptive Fuzzy Energy Manager |

EOG | Electrooculography |

MMCI | Multi-Modal Control Interface |

LSCL | Lightweight Secure Communication Layer |

IoT | Internet of Things |

